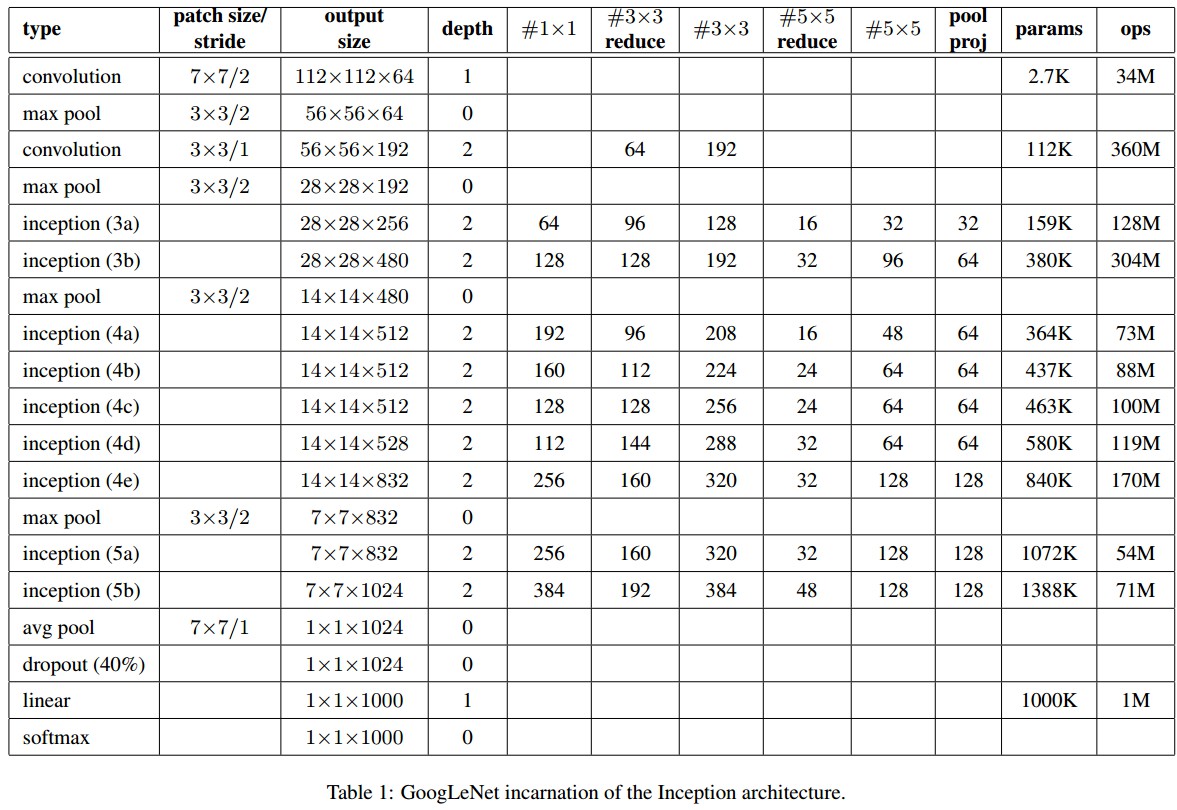

GoogleNet - Going deeper with convolutions - 2014

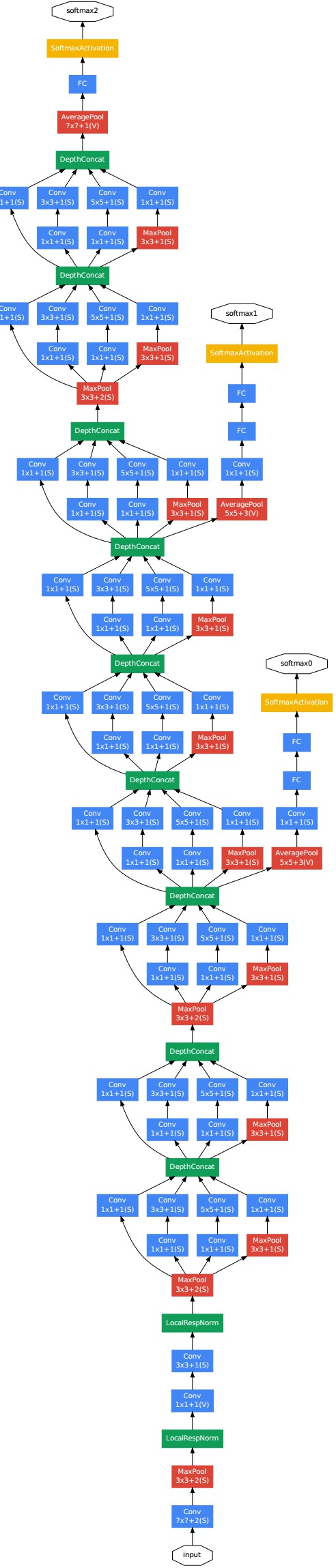

The GoogleNet (Inception V1) network architecture contains 9 Inception modules:

GoogleNet - Netscope

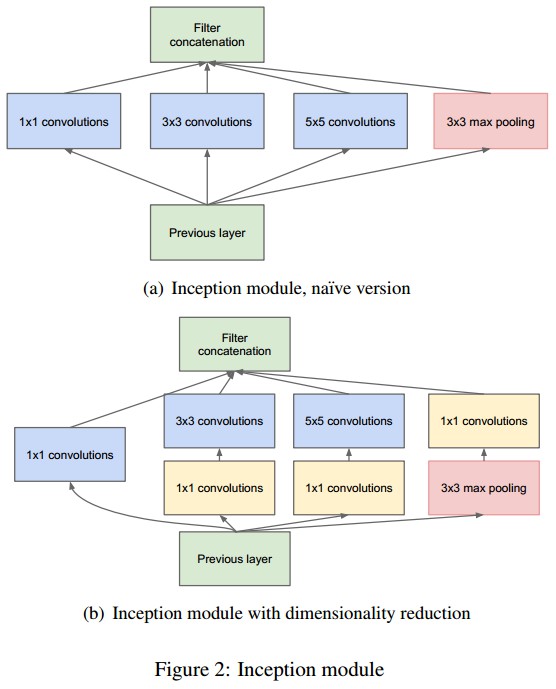

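Inception module (network width):

Each Inception module has 4 branches, built mainly from 1x1, 3x3, and 5x5 convolutions plus a max pooling operation (the pooling stride is 1, so that its output feature map has the same spatial size as the convolution outputs). The 1x1 convolutions reduce the channel dimension, which keeps the concatenation from making the feature maps too deep and reduces the number of network parameters.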
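To make the parameter saving from the 1x1 reduction concrete, here is a minimal sketch in plain Python. It counts convolution weights (biases ignored) for a 5x5 branch with and without a preceding 1x1 reduction. The channel counts (192 in, 16 after the 1x1, 32 out) are illustrative assumptions, and `conv_weights` is a hypothetical helper, not part of any library.

```python
def conv_weights(in_channels, out_channels, kernel_size):
    """Number of weights in a kernel_size x kernel_size convolution (no bias)."""
    return in_channels * out_channels * kernel_size * kernel_size


# Assumed, illustrative channel counts for a single branch.
in_ch, reduced_ch, out_ch = 192, 16, 32

# 5x5 convolution applied directly to the input feature map.
direct = conv_weights(in_ch, out_ch, 5)

# 1x1 reduction first, then the 5x5 convolution on the thinner tensor.
bottleneck = conv_weights(in_ch, reduced_ch, 1) + conv_weights(reduced_ch, out_ch, 5)

print(direct, bottleneck)  # 153600 15872
```

Under these assumptions the reduced branch needs roughly a tenth of the weights of the direct 5x5 convolution while producing the same number of output channels.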

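Inception V1 definition in TensorFlow Slim

The Inception modules in TensorFlow Slim's Inception V1 use only 1x1 and 3x3 convolution kernels; there is no 5x5 kernel, and the branch that would use 5x5 uses 3x3 instead. The only larger kernel in the network is the 7x7 convolution of the first layer.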
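Because the four branches are concatenated along the channel axis, the output depth of each module is simply the sum of its branch depths. The sketch below tabulates this in plain Python. The channel counts are read off the last convolution of each branch in the Slim listing that follows; the dictionary names are hypothetical and only serve the illustration.

```python
# Output channels of (Branch_0, Branch_1, Branch_2, Branch_3) for each module,
# taken from the final convolution of every branch in the Slim listing.
branch_outputs = {
    'Mixed_3b': (64, 128, 32, 32),
    'Mixed_3c': (128, 192, 96, 64),
    'Mixed_4b': (192, 208, 48, 64),
    'Mixed_4c': (160, 224, 64, 64),
    'Mixed_4d': (128, 256, 64, 64),
    'Mixed_4e': (112, 288, 64, 64),
    'Mixed_4f': (256, 320, 128, 128),
    'Mixed_5b': (256, 320, 128, 128),
    'Mixed_5c': (384, 384, 128, 128),
}

# Concatenation along the channel axis adds the branch depths.
module_depth = {name: sum(depths) for name, depths in branch_outputs.items()}

for name, depth in module_depth.items():
    print(name, depth)
```

The table has 9 entries, one per Inception module, and the depth grows from 256 channels after `Mixed_3b` to 1024 channels after `Mixed_5c`.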

"""
Definition of the Inception V1 classification network.
"""
from __future__ import absolute_import
from __future__ import division
from __future__ import print_function

import tensorflow as tf

from nets import inception_utils

# tf.contrib (and therefore tf.contrib.slim) is only available in TensorFlow 1.x.
slim = tf.contrib.slim
trunc_normal = lambda stddev: tf.truncated_normal_initializer(0.0, stddev)

def inception_v1_base(inputs,
                      final_endpoint='Mixed_5c',
                      scope='InceptionV1'):
  """
  Defines the Inception V1 base architecture.

  Args:
    inputs: a Tensor of shape [batch_size, height, width, channels].
    final_endpoint: the endpoint at which to stop building the network,
      i.e. the network depth. Candidate values:
      ['Conv2d_1a_7x7', 'MaxPool_2a_3x3', 'Conv2d_2b_1x1',
      'Conv2d_2c_3x3', 'MaxPool_3a_3x3', 'Mixed_3b', 'Mixed_3c',
      'MaxPool_4a_3x3', 'Mixed_4b', 'Mixed_4c', 'Mixed_4d', 'Mixed_4e',
      'Mixed_4f', 'MaxPool_5a_2x2', 'Mixed_5b', 'Mixed_5c']
    scope: optional variable_scope.

  Returns:
    net: the output Tensor of the layer named by final_endpoint.
    end_points: a dictionary containing the activations of each layer
      of the network.

  Raises:
    ValueError: if final_endpoint is not set to one of the predefined values.
  """

  end_points = {}
  with tf.variable_scope(scope, 'InceptionV1', [inputs]):
    with slim.arg_scope(
        [slim.conv2d, slim.fully_connected],
        weights_initializer=trunc_normal(0.01)):
      with slim.arg_scope([slim.conv2d, slim.max_pool2d],
                          stride=1, padding='SAME'):
        end_point = 'Conv2d_1a_7x7'
        net = slim.conv2d(inputs, 64, [7, 7], stride=2, scope=end_point)
        end_points[end_point] = net
        if final_endpoint == end_point: return net, end_points

        end_point = 'MaxPool_2a_3x3'
        net = slim.max_pool2d(net, [3, 3], stride=2, scope=end_point)
        end_points[end_point] = net
        if final_endpoint == end_point: return net, end_points

        end_point = 'Conv2d_2b_1x1'
        net = slim.conv2d(net, 64, [1, 1], scope=end_point)
        end_points[end_point] = net
        if final_endpoint == end_point: return net, end_points

        end_point = 'Conv2d_2c_3x3'
        net = slim.conv2d(net, 192, [3, 3], scope=end_point)
        end_points[end_point] = net
        if final_endpoint == end_point: return net, end_points

        end_point = 'MaxPool_3a_3x3'
        net = slim.max_pool2d(net, [3, 3], stride=2, scope=end_point)
        end_points[end_point] = net
        if final_endpoint == end_point: return net, end_points

        end_point = 'Mixed_3b'
        with tf.variable_scope(end_point):
          with tf.variable_scope('Branch_0'):
            branch_0 = slim.conv2d(net, 64, [1, 1], scope='Conv2d_0a_1x1')
          with tf.variable_scope('Branch_1'):
            branch_1 = slim.conv2d(net, 96, [1, 1], scope='Conv2d_0a_1x1')
            branch_1 = slim.conv2d(branch_1, 128, [3, 3], scope='Conv2d_0b_3x3')
          with tf.variable_scope('Branch_2'):
            branch_2 = slim.conv2d(net, 16, [1, 1], scope='Conv2d_0a_1x1')
            branch_2 = slim.conv2d(branch_2, 32, [3, 3], scope='Conv2d_0b_3x3')
          with tf.variable_scope('Branch_3'):
            branch_3 = slim.max_pool2d(net, [3, 3], scope='MaxPool_0a_3x3')
            branch_3 = slim.conv2d(branch_3, 32, [1, 1], scope='Conv2d_0b_1x1')
          net = tf.concat(axis=3, values=[branch_0, branch_1, branch_2, branch_3])
        end_points[end_point] = net
        if final_endpoint == end_point: return net, end_points

        end_point = 'Mixed_3c'
        with tf.variable_scope(end_point):
          with tf.variable_scope('Branch_0'):
            branch_0 = slim.conv2d(net, 128, [1, 1], scope='Conv2d_0a_1x1')
          with tf.variable_scope('Branch_1'):
            branch_1 = slim.conv2d(net, 128, [1, 1], scope='Conv2d_0a_1x1')
            branch_1 = slim.conv2d(branch_1, 192, [3, 3], scope='Conv2d_0b_3x3')
          with tf.variable_scope('Branch_2'):
            branch_2 = slim.conv2d(net, 32, [1, 1], scope='Conv2d_0a_1x1')
            branch_2 = slim.conv2d(branch_2, 96, [3, 3], scope='Conv2d_0b_3x3')
          with tf.variable_scope('Branch_3'):
            branch_3 = slim.max_pool2d(net, [3, 3], scope='MaxPool_0a_3x3')
            branch_3 = slim.conv2d(branch_3, 64, [1, 1], scope='Conv2d_0b_1x1')
          net = tf.concat(axis=3, values=[branch_0, branch_1, branch_2, branch_3])
        end_points[end_point] = net
        if final_endpoint == end_point: return net, end_points

        end_point = 'MaxPool_4a_3x3'
        net = slim.max_pool2d(net, [3, 3], stride=2, scope=end_point)
        end_points[end_point] = net
        if final_endpoint == end_point: return net, end_points

        end_point = 'Mixed_4b'
        with tf.variable_scope(end_point):
          with tf.variable_scope('Branch_0'):
            branch_0 = slim.conv2d(net, 192, [1, 1], scope='Conv2d_0a_1x1')
          with tf.variable_scope('Branch_1'):
            branch_1 = slim.conv2d(net, 96, [1, 1], scope='Conv2d_0a_1x1')
            branch_1 = slim.conv2d(branch_1, 208, [3, 3], scope='Conv2d_0b_3x3')
          with tf.variable_scope('Branch_2'):
            branch_2 = slim.conv2d(net, 16, [1, 1], scope='Conv2d_0a_1x1')
            branch_2 = slim.conv2d(branch_2, 48, [3, 3], scope='Conv2d_0b_3x3')
          with tf.variable_scope('Branch_3'):
            branch_3 = slim.max_pool2d(net, [3, 3], scope='MaxPool_0a_3x3')
            branch_3 = slim.conv2d(branch_3, 64, [1, 1], scope='Conv2d_0b_1x1')
          net = tf.concat(axis=3, values=[branch_0, branch_1, branch_2, branch_3])
        end_points[end_point] = net
        if final_endpoint == end_point: return net, end_points

        end_point = 'Mixed_4c'
        with tf.variable_scope(end_point):
          with tf.variable_scope('Branch_0'):
            branch_0 = slim.conv2d(net, 160, [1, 1], scope='Conv2d_0a_1x1')
          with tf.variable_scope('Branch_1'):
            branch_1 = slim.conv2d(net, 112, [1, 1], scope='Conv2d_0a_1x1')
            branch_1 = slim.conv2d(branch_1, 224, [3, 3], scope='Conv2d_0b_3x3')
          with tf.variable_scope('Branch_2'):
            branch_2 = slim.conv2d(net, 24, [1, 1], scope='Conv2d_0a_1x1')
            branch_2 = slim.conv2d(branch_2, 64, [3, 3], scope='Conv2d_0b_3x3')
          with tf.variable_scope('Branch_3'):
            branch_3 = slim.max_pool2d(net, [3, 3], scope='MaxPool_0a_3x3')
            branch_3 = slim.conv2d(branch_3, 64, [1, 1], scope='Conv2d_0b_1x1')
          net = tf.concat(axis=3, values=[branch_0, branch_1, branch_2, branch_3])
        end_points[end_point] = net
        if final_endpoint == end_point: return net, end_points

        end_point = 'Mixed_4d'
        with tf.variable_scope(end_point):
          with tf.variable_scope('Branch_0'):
            branch_0 = slim.conv2d(net, 128, [1, 1], scope='Conv2d_0a_1x1')
          with tf.variable_scope('Branch_1'):
            branch_1 = slim.conv2d(net, 128, [1, 1], scope='Conv2d_0a_1x1')
            branch_1 = slim.conv2d(branch_1, 256, [3, 3], scope='Conv2d_0b_3x3')
          with tf.variable_scope('Branch_2'):
            branch_2 = slim.conv2d(net, 24, [1, 1], scope='Conv2d_0a_1x1')
            branch_2 = slim.conv2d(branch_2, 64, [3, 3], scope='Conv2d_0b_3x3')
          with tf.variable_scope('Branch_3'):
            branch_3 = slim.max_pool2d(net, [3, 3], scope='MaxPool_0a_3x3')
            branch_3 = slim.conv2d(branch_3, 64, [1, 1], scope='Conv2d_0b_1x1')
          net = tf.concat(axis=3, values=[branch_0, branch_1, branch_2, branch_3])
        end_points[end_point] = net
        if final_endpoint == end_point: return net, end_points

        end_point = 'Mixed_4e'
        with tf.variable_scope(end_point):
          with tf.variable_scope('Branch_0'):
            branch_0 = slim.conv2d(net, 112, [1, 1], scope='Conv2d_0a_1x1')
          with tf.variable_scope('Branch_1'):
            branch_1 = slim.conv2d(net, 144, [1, 1], scope='Conv2d_0a_1x1')
            branch_1 = slim.conv2d(branch_1, 288, [3, 3], scope='Conv2d_0b_3x3')
          with tf.variable_scope('Branch_2'):
            branch_2 = slim.conv2d(net, 32, [1, 1], scope='Conv2d_0a_1x1')
            branch_2 = slim.conv2d(branch_2, 64, [3, 3], scope='Conv2d_0b_3x3')
          with tf.variable_scope('Branch_3'):
            branch_3 = slim.max_pool2d(net, [3, 3], scope='MaxPool_0a_3x3')
            branch_3 = slim.conv2d(branch_3, 64, [1, 1], scope='Conv2d_0b_1x1')
          net = tf.concat(axis=3, values=[branch_0, branch_1, branch_2, branch_3])
        end_points[end_point] = net
        if final_endpoint == end_point: return net, end_points

        end_point = 'Mixed_4f'
        with tf.variable_scope(end_point):
          with tf.variable_scope('Branch_0'):
            branch_0 = slim.conv2d(net, 256, [1, 1], scope='Conv2d_0a_1x1')
          with tf.variable_scope('Branch_1'):
            branch_1 = slim.conv2d(net, 160, [1, 1], scope='Conv2d_0a_1x1')
            branch_1 = slim.conv2d(branch_1, 320, [3, 3], scope='Conv2d_0b_3x3')
          with tf.variable_scope('Branch_2'):
            branch_2 = slim.conv2d(net, 32, [1, 1], scope='Conv2d_0a_1x1')
            branch_2 = slim.conv2d(branch_2, 128, [3, 3], scope='Conv2d_0b_3x3')
          with tf.variable_scope('Branch_3'):
            branch_3 = slim.max_pool2d(net, [3, 3], scope='MaxPool_0a_3x3')
            branch_3 = slim.conv2d(branch_3, 128, [1, 1], scope='Conv2d_0b_1x1')
          net = tf.concat(axis=3, values=[branch_0, branch_1, branch_2, branch_3])
        end_points[end_point] = net
        if final_endpoint == end_point: return net, end_points

        end_point = 'MaxPool_5a_2x2'
        net = slim.max_pool2d(net, [2, 2], stride=2, scope=end_point)
        end_points[end_point] = net
        if final_endpoint == end_point: return net, end_points

        end_point = 'Mixed_5b'
        with tf.variable_scope(end_point):
          with tf.variable_scope('Branch_0'):
            branch_0 = slim.conv2d(net, 256, [1, 1], scope='Conv2d_0a_1x1')
          with tf.variable_scope('Branch_1'):
            branch_1 = slim.conv2d(net, 160, [1, 1], scope='Conv2d_0a_1x1')
            branch_1 = slim.conv2d(branch_1, 320, [3, 3], scope='Conv2d_0b_3x3')
          with tf.variable_scope('Branch_2'):
            branch_2 = slim.conv2d(net, 32, [1, 1], scope='Conv2d_0a_1x1')
            branch_2 = slim.conv2d(branch_2, 128, [3, 3], scope='Conv2d_0a_3x3')
          with tf.variable_scope('Branch_3'):
            branch_3 = slim.max_pool2d(net, [3, 3], scope='MaxPool_0a_3x3')
            branch_3 = slim.conv2d(branch_3, 128, [1, 1], scope='Conv2d_0b_1x1')
          net = tf.concat(axis=3, values=[branch_0, branch_1, branch_2, branch_3])
        end_points[end_point] = net
        if final_endpoint == end_point: return net, end_points

        end_point = 'Mixed_5c'
        with tf.variable_scope(end_point):
          with tf.variable_scope('Branch_0'):
            branch_0 = slim.conv2d(net, 384, [1, 1], scope='Conv2d_0a_1x1')
          with tf.variable_scope('Branch_1'):
            branch_1 = slim.conv2d(net, 192, [1, 1], scope='Conv2d_0a_1x1')
            branch_1 = slim.conv2d(branch_1, 384, [3, 3], scope='Conv2d_0b_3x3')
          with tf.variable_scope('Branch_2'):
            branch_2 = slim.conv2d(net, 48, [1, 1], scope='Conv2d_0a_1x1')
            branch_2 = slim.conv2d(branch_2, 128, [3, 3], scope='Conv2d_0b_3x3')
          with tf.variable_scope('Branch_3'):
            branch_3 = slim.max_pool2d(net, [3, 3], scope='MaxPool_0a_3x3')
            branch_3 = slim.conv2d(branch_3, 128, [1, 1], scope='Conv2d_0b_1x1')
          net = tf.concat(axis=3, values=[branch_0, branch_1, branch_2, branch_3])
        end_points[end_point] = net
        if final_endpoint == end_point: return net, end_points
    raise ValueError('Unknown final endpoint %s' % final_endpoint)

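def inception_v1(inputs,
                 num_classes=1000,
                 is_training=True,
                 dropout_keep_prob=0.8,
                 prediction_fn=slim.softmax,
                 spatial_squeeze=True,
                 reuse=None,
                 scope='InceptionV1',
                 global_pool=False):
  """
  Defines the Inception V1 architecture.

  Args:
    inputs: a Tensor of shape [batch_size, height, width, channels].
    num_classes: number of classes to predict. If 0 or None, the logits layer
      is omitted and the input features of the logits layer (the layer
      before dropout) are returned instead.
    is_training: whether the network is being built for training.
    dropout_keep_prob: the fraction of activations that is kept.
    prediction_fn: function that computes predictions from the logits,
      e.g. softmax.
    spatial_squeeze: if True, logits has shape [B, C]; if False, logits has
      shape [B, 1, 1, C], where B is batch_size and C is the number of
      classes.
    reuse: whether the network and its variables should be reused. To reuse
      them, 'scope' must be given.
    scope: optional variable_scope.
    global_pool: optional boolean that controls the average pooling before
      the logits layer. The default is False, in which case a pooling layer
      with a fixed window is used; it reduces default-sized inputs to 1x1,
      and larger inputs lead to larger outputs. If True, inputs of any size
      are pooled down to 1x1.

  Returns:
    net: a Tensor. If num_classes is non-zero, the logits (pre-softmax
      activations); if num_classes is 0 or None, the non-dropped-out input
      to the logits layer.
    end_points: a dictionary containing the activations of each layer of
      the network.
  """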

  # Final pooling and prediction
  with tf.variable_scope(scope, 'InceptionV1', [inputs], reuse=reuse) as scope:
    with slim.arg_scope([slim.batch_norm, slim.dropout],
                        is_training=is_training):
      net, end_points = inception_v1_base(inputs, scope=scope)
      with tf.variable_scope('Logits'):
        if global_pool:
          # Global average pooling.
          net = tf.reduce_mean(net, [1, 2], keep_dims=True, name='global_pool')
          end_points['global_pool'] = net
        else:
          # Pooling with a fixed kernel size.
          net = slim.avg_pool2d(net, [7, 7], stride=1, scope='AvgPool_0a_7x7')
          end_points['AvgPool_0a_7x7'] = net
        if not num_classes:
          return net, end_points
        net = slim.dropout(net, dropout_keep_prob, scope='Dropout_0b')
        logits = slim.conv2d(net, num_classes, [1, 1], activation_fn=None,
                             normalizer_fn=None, scope='Conv2d_0c_1x1')
        if spatial_squeeze:
          logits = tf.squeeze(logits, [1, 2], name='SpatialSqueeze')

        end_points['Logits'] = logits
        end_points['Predictions'] = prediction_fn(logits, scope='Predictions')
  return logits, end_points
inception_v1.default_image_size = 224

inception_v1_arg_scope = inception_utils.inception_arg_scope
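
The relationship between `default_image_size = 224`, the fixed 7x7 average pooling, and the note that larger inputs give larger outputs can be checked with a small calculation. The sketch below is plain Python and only traces spatial sizes. It assumes that the five stride-2 layers use 'SAME' padding (as set by the `arg_scope` in the listing) and that `slim.avg_pool2d` keeps its default 'VALID' padding; the helper names are hypothetical.

```python
import math

# The five stride-2 layers in the listing; all use 'SAME' padding.
STRIDE2_LAYERS = ['Conv2d_1a_7x7', 'MaxPool_2a_3x3', 'MaxPool_3a_3x3',
                  'MaxPool_4a_3x3', 'MaxPool_5a_2x2']


def same_out(size, stride):
    """Spatial output size of a 'SAME'-padded layer: ceil(size / stride)."""
    return math.ceil(size / stride)


def valid_out(size, kernel, stride):
    """Spatial output size of a 'VALID'-padded layer."""
    return (size - kernel) // stride + 1


def final_feature_size(input_size):
    """Spatial size of Mixed_5c for a square input of the given size."""
    size = input_size
    for _ in STRIDE2_LAYERS:
        size = same_out(size, 2)
    return size


def pooled_size(input_size):
    """Spatial size after AvgPool_0a_7x7 (assumed 'VALID', stride 1)."""
    return valid_out(final_feature_size(input_size), kernel=7, stride=1)


print(final_feature_size(224), pooled_size(224))  # 7 1
print(final_feature_size(448), pooled_size(448))  # 14 8
```

With a 224x224 input the last feature map is 7x7, so the fixed 7x7 window reduces it to 1x1. With a 448x448 input the fixed window leaves an 8x8 map, which is the case where `global_pool=True` would be needed to get a 1x1 output.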