tensorboardX is a visualization library for PyTorch (as well as Chainer, MXNet, NumPy, etc.).

It is similar to the tensorboard module of TensorFlow.

tensorboardX writes TensorBoard events through simple function calls.

- Supported summaries: scalar, image, figure, histogram, audio, text, graph, onnx_graph, embedding, pr_curve and video summaries.

Requirements for demo_graph.py: tensorboardX>=1.2, pytorch>=0.4.

Installation:

```shell
sudo pip install tensorboardX
# or
sudo pip install git+https://github.com/lanpa/tensorboardX
```

1. TensorBoardX usage demo

demo.py

```python
# demo.py
import numpy as np
import torch
import torchvision.models as models
import torchvision.utils as vutils
from torchvision import datasets
from tensorboardX import SummaryWriter

resnet18 = models.resnet18()  # randomly initialized, no pretrained weights
writer = SummaryWriter()
sample_rate = 44100
freqs = [262, 294, 330, 349, 392, 440, 440, 440, 440, 440, 440]

for n_iter in range(100):
    dummy_s1 = torch.rand(1)
    dummy_s2 = torch.rand(1)
    # data grouping by `slash`
    writer.add_scalar('data/scalar1', dummy_s1[0], n_iter)
    writer.add_scalar('data/scalar2', dummy_s2[0], n_iter)
    writer.add_scalars('data/scalar_group', {'xsinx': n_iter * np.sin(n_iter),
                                             'xcosx': n_iter * np.cos(n_iter),
                                             'arctanx': np.arctan(n_iter)}, n_iter)

    dummy_img = torch.rand(32, 3, 64, 64)  # output from network
    if n_iter % 10 == 0:
        x = vutils.make_grid(dummy_img, normalize=True, scale_each=True)
        writer.add_image('Image', x, n_iter)

        # 2 seconds of a cosine tone; the amplitude of sound should be in [-1, 1]
        t = torch.arange(sample_rate * 2, dtype=torch.float32) / sample_rate
        dummy_audio = torch.cos(freqs[n_iter // 10] * np.pi * t)
        writer.add_audio('myAudio', dummy_audio, n_iter, sample_rate=sample_rate)

        writer.add_text('Text', 'text logged at step:' + str(n_iter), n_iter)

        for name, param in resnet18.named_parameters():
            writer.add_histogram(name, param.detach().cpu().numpy(), n_iter)

        # needs tensorboard 0.4RC or later
        writer.add_pr_curve('xoxo', np.random.randint(2, size=100), np.random.rand(100), n_iter)

dataset = datasets.MNIST('mnist', train=False, download=True)
images = dataset.data[:100].float()
label = dataset.targets[:100]
features = images.view(100, 784)
writer.add_embedding(features, metadata=label.tolist(), label_img=images.unsqueeze(1))

# export scalar data to JSON for external processing
writer.export_scalars_to_json("./all_scalars.json")
writer.close()
```

Run the demo.py code above:

```shell
python demo.py
```

Then visualize the results with TensorBoard (TensorBoard must be installed first, e.g. together with TensorFlow):

```shell
tensorboard --logdir ./runs
```

The demo.py code mainly logs the following kinds of information:

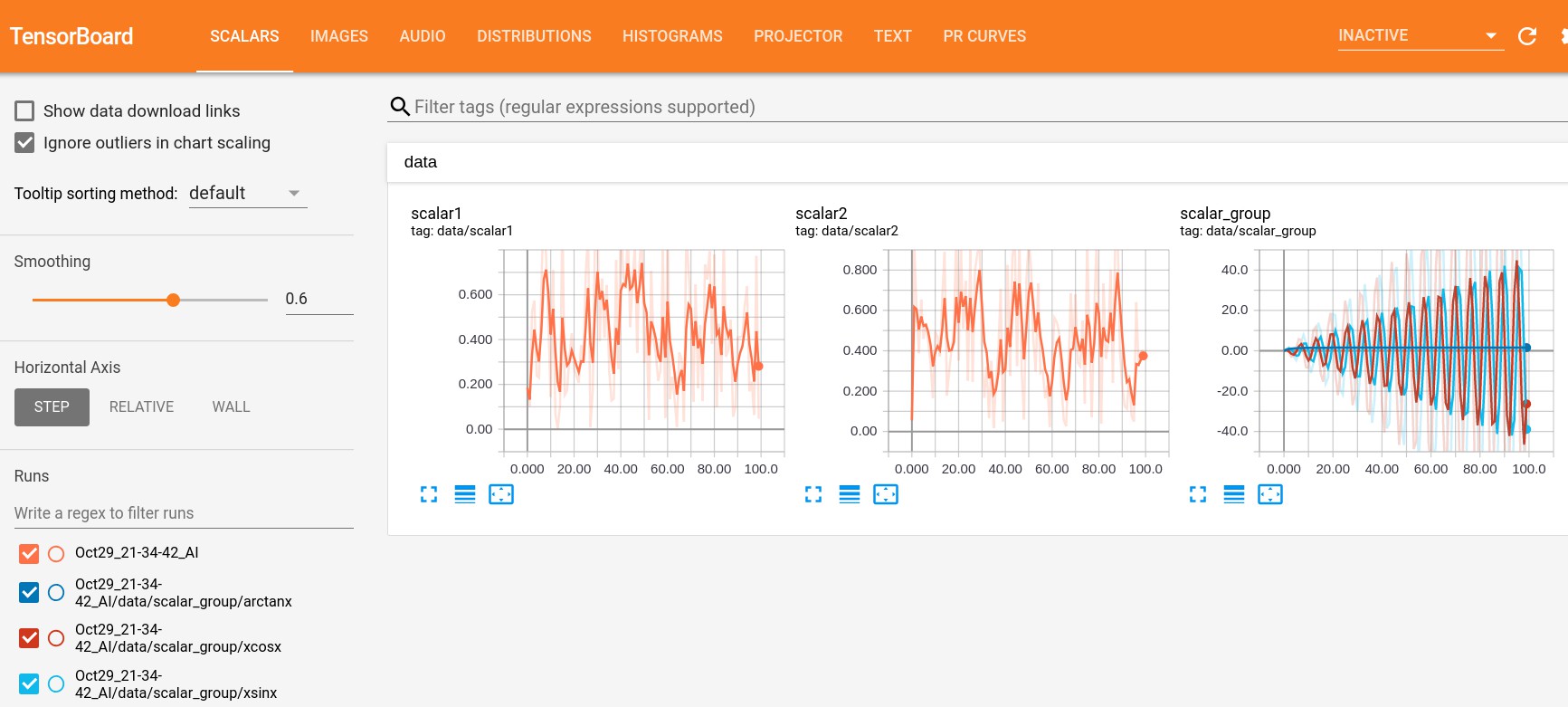

SCALARS: data/scalar1, data/scalar2 and data/scalar_group

```python
writer.add_scalar('data/scalar1', dummy_s1[0], n_iter)
writer.add_scalar('data/scalar2', dummy_s2[0], n_iter)
writer.add_scalars('data/scalar_group', {'xsinx': n_iter * np.sin(n_iter),
                                         'xcosx': n_iter * np.cos(n_iter),
                                         'arctanx': np.arctan(n_iter)}, n_iter)
```
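TensorBoard's Scalars dashboard roughly groups charts by the part of the tag before the first `/`, which is why `data/scalar1`, `data/scalar2` and `data/scalar_group` end up in a single `data` section. The sketch below mimics that grouping in plain Python; `group_tags` is a hypothetical helper for illustration, not part of tensorboardX or TensorBoard.

```python
from collections import defaultdict


def group_tags(tags):
    """Group scalar tags by the prefix before the first '/' (hypothetical helper).

    A tag without a slash goes into a group named after the tag itself.
    """
    groups = defaultdict(list)
    for tag in tags:
        prefix = tag.split("/", 1)[0]
        groups[prefix].append(tag)
    return dict(groups)


tags = ["data/scalar1", "data/scalar2", "data/scalar_group", "loss"]
print(group_tags(tags))
# {'data': ['data/scalar1', 'data/scalar2', 'data/scalar_group'], 'loss': ['loss']}
```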

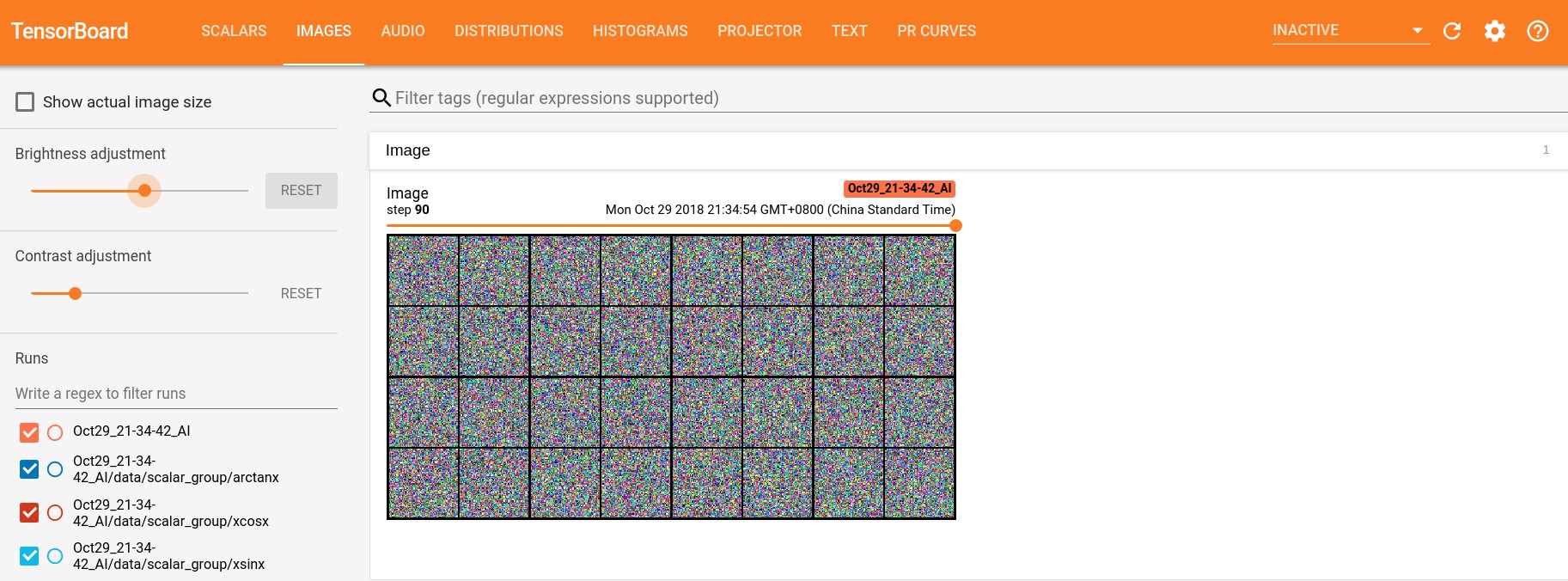

IMAGES:

```python
writer.add_image('Image', x, n_iter)
```
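`vutils.make_grid` tiles the 32 images of the batch into a single 3-channel image before it is passed to `add_image`. Assuming torchvision's defaults (`nrow=8`, `padding=2`) and a batch of more than one image, the size of the resulting grid can be worked out as in this sketch; `grid_shape` is a hypothetical helper that only reproduces the layout arithmetic.

```python
import math


def grid_shape(n, c, h, w, nrow=8, padding=2):
    """Shape (C, H, W) of the grid make_grid builds from an (n, c, h, w) batch.

    Hypothetical helper mirroring torchvision's layout arithmetic for n > 1.
    Single-channel batches are expanded to 3 channels by make_grid.
    """
    xmaps = min(nrow, n)              # images per row
    ymaps = math.ceil(n / xmaps)      # number of rows
    cell_h, cell_w = h + padding, w + padding
    channels = 3 if c == 1 else c
    return (channels, cell_h * ymaps + padding, cell_w * xmaps + padding)


print(grid_shape(32, 3, 64, 64))  # (3, 266, 530)
```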

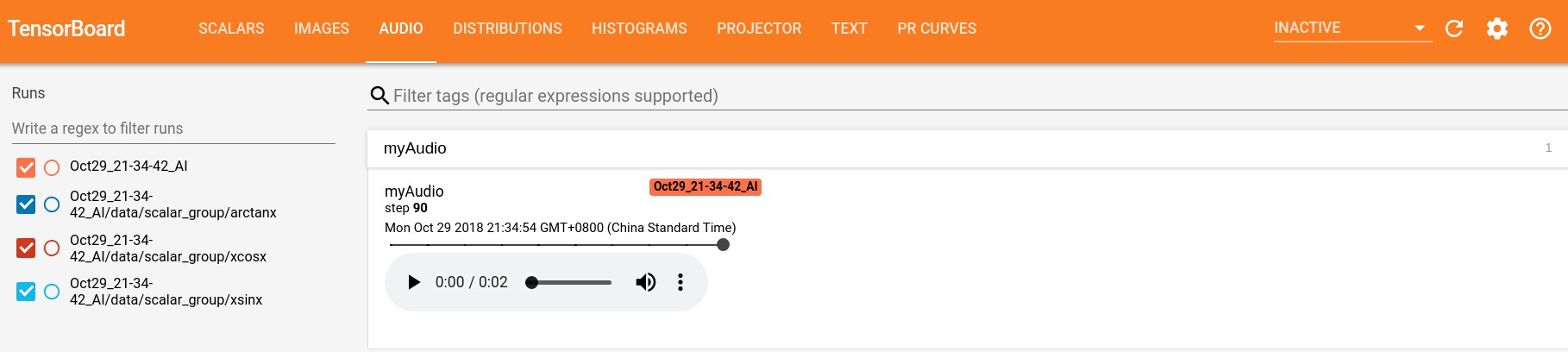

AUDIO:

```python
writer.add_audio('myAudio', dummy_audio, n_iter, sample_rate=sample_rate)
```
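`add_audio` expects samples in [-1, 1] at the given `sample_rate`. A minimal sketch of building such a clip in plain Python follows; `tone` is a hypothetical helper. Note that the demo computes `cos(freq * pi * t)`, which corresponds to `freq / 2` Hz; the sketch uses `2 * math.pi * freq_hz` so that its argument is in Hz.

```python
import math


def tone(freq_hz, seconds, sample_rate=44100, amplitude=0.5):
    """Return a cosine tone as a list of floats in [-amplitude, amplitude].

    Hypothetical helper: amplitude must stay <= 1 so samples fit the [-1, 1] range.
    """
    if not 0 < amplitude <= 1:
        raise ValueError("amplitude must be in (0, 1]")
    n = int(seconds * sample_rate)
    return [amplitude * math.cos(2 * math.pi * freq_hz * i / sample_rate) for i in range(n)]


clip = tone(440, 2.0)
print(len(clip))  # 88200
```

The list can be turned into a tensor (for example with `torch.tensor(clip)`) before it is logged.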

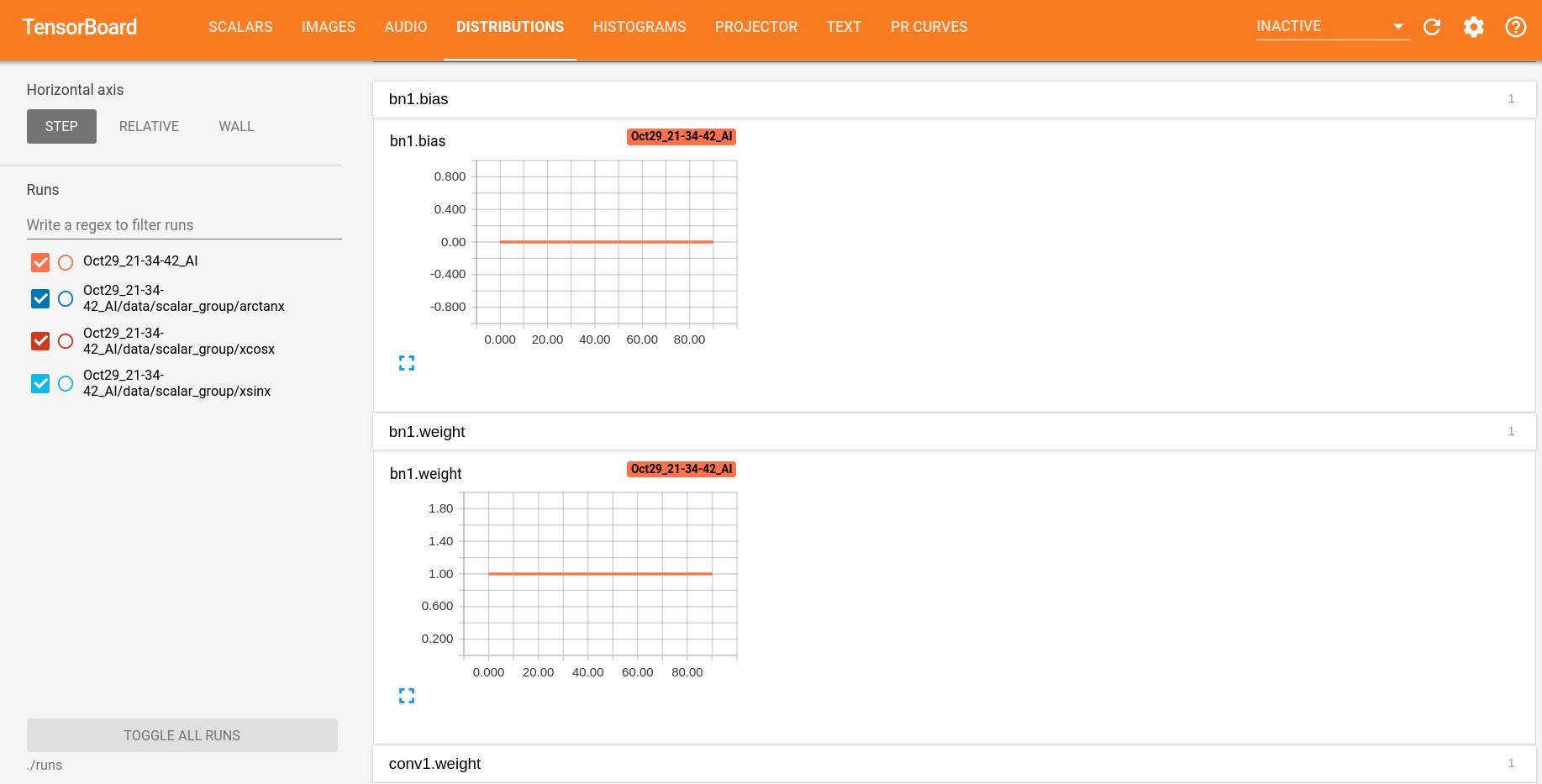

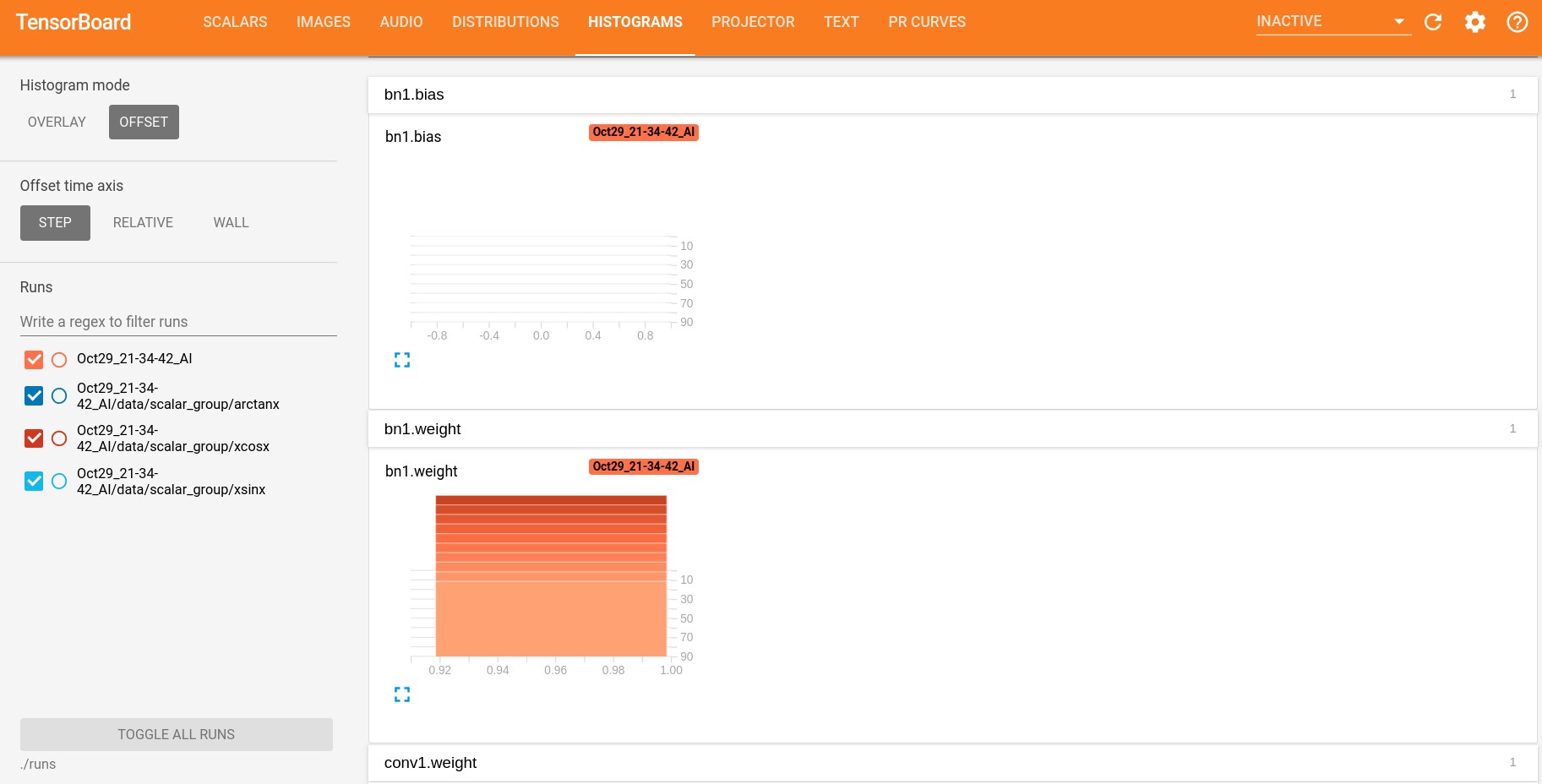

DISTRIBUTIONS and HISTOGRAMS:

```python
for name, param in resnet18.named_parameters():
    writer.add_histogram(name, param.detach().cpu().numpy(), n_iter)
```
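`add_histogram` summarizes each parameter tensor as bucket counts over its value range, which the HISTOGRAMS and DISTRIBUTIONS tabs then render per step. The sketch below shows the bucketing idea with equal-width bins in plain Python; it is an illustration only, since tensorboardX uses TensorFlow-style, non-uniform bucket edges by default (`bins='tensorflow'`).

```python
def histogram(values, num_bins=10):
    """Count values into num_bins equal-width buckets spanning [min, max].

    Returns (edges, counts); the last bucket also includes the maximum value.
    """
    lo, hi = min(values), max(values)
    width = (hi - lo) / num_bins or 1.0  # avoid zero width when all values are equal
    counts = [0] * num_bins
    for v in values:
        idx = min(int((v - lo) / width), num_bins - 1)
        counts[idx] += 1
    edges = [lo + k * width for k in range(num_bins + 1)]
    return edges, counts


edges, counts = histogram([0.0, 0.1, 0.2, 0.25, 0.9, 1.0], num_bins=4)
print(counts)  # [3, 1, 0, 2]
```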

TEXT:

```python
writer.add_text('Text', 'text logged at step:' + str(n_iter), n_iter)
```

PR CURVES:

```python
# needs tensorboard 0.4RC or later
writer.add_pr_curve('xoxo', np.random.randint(2, size=100), np.random.rand(100), n_iter)
```
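`add_pr_curve` takes binary ground-truth `labels` and `predictions` (probabilities in [0, 1]) and plots precision against recall as the decision threshold varies. The computation it visualizes can be sketched in plain Python; `pr_points` is a hypothetical helper, and tensorboardX evaluates its own fixed set of thresholds (controlled by `num_thresholds`) rather than the ones chosen here.

```python
def pr_points(labels, predictions, thresholds):
    """Return (threshold, precision, recall) for each threshold.

    A prediction counts as positive when it is >= the threshold.
    Precision is reported as 1.0 when nothing is predicted positive.
    """
    positives = sum(labels)
    points = []
    for t in thresholds:
        tp = sum(1 for y, p in zip(labels, predictions) if p >= t and y == 1)
        fp = sum(1 for y, p in zip(labels, predictions) if p >= t and y == 0)
        precision = tp / (tp + fp) if tp + fp else 1.0
        recall = tp / positives if positives else 0.0
        points.append((t, precision, recall))
    return points


labels = [1, 0, 1, 1, 0]
predictions = [0.9, 0.8, 0.6, 0.3, 0.1]
for t, p, r in pr_points(labels, predictions, [0.0, 0.5, 0.85]):
    print(f"threshold={t:.2f} precision={p:.2f} recall={r:.2f}")
```

Raising the threshold trades recall for precision, which is exactly the curve drawn in the PR CURVES tab.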

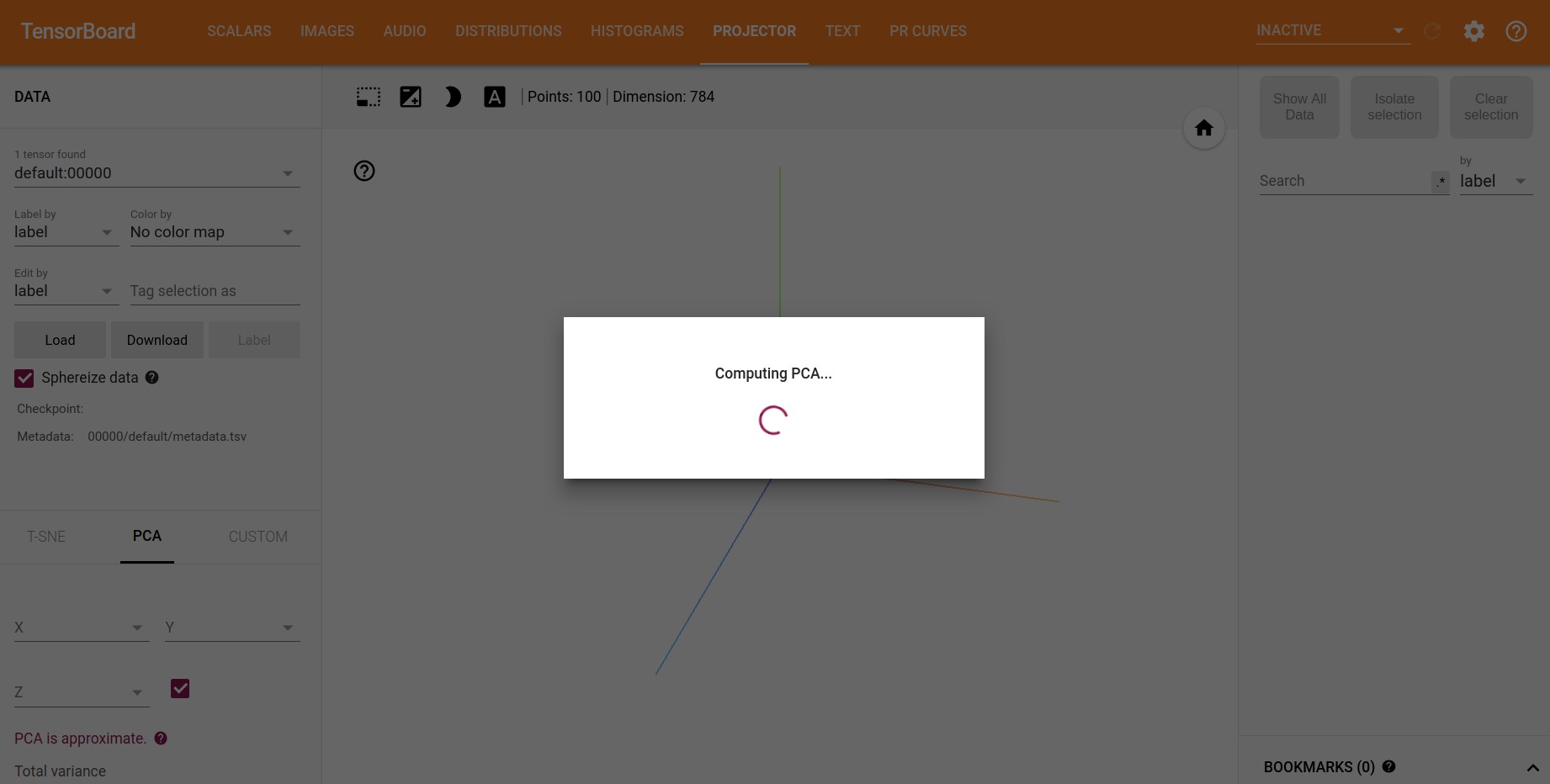

PROJECTOR:

```python
dataset = datasets.MNIST('mnist', train=False, download=True)
images = dataset.data[:100].float()
label = dataset.targets[:100]
features = images.view(100, 784)
writer.add_embedding(features, metadata=label.tolist(), label_img=images.unsqueeze(1))
```

(The projector kept computing PCA and never finished...)

2. TensorBoardX - Graph visualization

demo_graph.py

import torch

import torch.nn as nn

import torch.nn.functional as F

import torchvision

from torch.autograd import Variable

from tensorboardX import SummaryWriter

class Net1(nn.Module):

def __init__(self):

super(Net1, self).__init__()

self.conv1 = nn.Conv2d(1, 10, kernel_size=5)

self.conv2 = nn.Conv2d(10, 20, kernel_size=5)

self.conv2_drop = nn.Dropout2d()

self.fc1 = nn.Linear(320, 50)

self.fc2 = nn.Linear(50, 10)

self.bn = nn.BatchNorm2d(20)

def forward(self, x):

x = F.max_pool2d(self.conv1(x), 2)

x = F.relu(x) + F.relu(-x)

x = F.relu(F.max_pool2d(self.conv2_drop(self.conv2(x)), 2))

x = self.bn(x)

x = x.view(-1, 320)

x = F.relu(self.fc1(x))

x = F.dropout(x, training=self.training)

x = self.fc2(x)

x = F.softmax(x, dim=1)

return x

class Net2(nn.Module):

def __init__(self):

super(Net2, self).__init__()

self.conv1 = nn.Conv2d(1, 10, kernel_size=5)

self.conv2 = nn.Conv2d(10, 20, kernel_size=5)

self.conv2_drop = nn.Dropout2d()

self.fc1 = nn.Linear(320, 50)

self.fc2 = nn.Linear(50, 10)

def forward(self, x):

x = F.relu(F.max_pool2d(self.conv1(x), 2))

x = F.relu(F.max_pool2d(self.conv2_drop(self.conv2(x)), 2))

x = x.view(-1, 320)

x = F.relu(self.fc1(x))

x = F.dropout(x, training=self.training)

x = self.fc2(x)

x = F.log_softmax(x, dim=1)

return x

dummy_input = Variable(torch.rand(13, 1, 28, 28))

model = Net1()

with SummaryWriter(comment='Net1') as w:

w.add_graph(model, (dummy_input, ))

model = Net2()

with SummaryWriter(comment='Net2') as w:

w.add_graph(model, (dummy_input, ))

dummy_input = torch.Tensor(1, 3, 224, 224)

with SummaryWriter(comment='alexnet') as w:

model = torchvision.models.alexnet()

w.add_graph(model, (dummy_input, ))

with SummaryWriter(comment='vgg19') as w:

model = torchvision.models.vgg19()

w.add_graph(model, (dummy_input, ))

with SummaryWriter(comment='densenet121') as w:

model = torchvision.models.densenet121()

w.add_graph(model, (dummy_input, ))

with SummaryWriter(comment='resnet18') as w:

model = torchvision.models.resnet18()

w.add_graph(model, (dummy_input, ))

class SimpleModel(nn.Module):

def __init__(self):

super(SimpleModel, self).__init__()

def forward(self, x):

return x * 2

model = SimpleModel()

dummy_input = (torch.zeros(1, 2, 3),)

with SummaryWriter(comment='constantModel') as w:

w.add_graph(model, dummy_input)

def conv3x3(in_planes, out_planes, stride=1):

"""3x3 convolution with padding"""

return nn.Conv2d(in_planes, out_planes, kernel_size=3, stride=stride,

padding=1, bias=False)

class BasicBlock(nn.Module):

expansion = 1

def __init__(self, inplanes, planes, stride=1, downsample=None):

super(BasicBlock, self).__init__()

self.conv1 = conv3x3(inplanes, planes, stride)

self.bn1 = nn.BatchNorm2d(planes)

# self.relu = nn.ReLU(inplace=True)

self.conv2 = conv3x3(planes, planes)

self.bn2 = nn.BatchNorm2d(planes)

self.stride = stride

def forward(self, x):

residual = x

out = self.conv1(x)

out = self.bn1(out)

out = F.relu(out)

out = self.conv2(out)

out = self.bn2(out)

out += residual

out = F.relu(out)

return out

dummy_input = torch.rand(1, 3, 224, 224)

with SummaryWriter(comment='basicblock') as w:

model = BasicBlock(3, 3)

w.add_graph(model, (dummy_input, )) # , verbose=True)

class RNN(nn.Module):

def __init__(self, input_size, hidden_size, output_size):

super(RNN, self).__init__()

self.hidden_size = hidden_size

self.i2h = nn.Linear(

n_categories +

input_size +

hidden_size,

hidden_size)

self.i2o = nn.Linear(

n_categories +

input_size +

hidden_size,

output_size)

self.o2o = nn.Linear(hidden_size + output_size, output_size)

self.dropout = nn.Dropout(0.1)

self.softmax = nn.LogSoftmax(dim=1)

def forward(self, category, input, hidden):

input_combined = torch.cat((category, input, hidden), 1)

hidden = self.i2h(input_combined)

output = self.i2o(input_combined)

output_combined = torch.cat((hidden, output), 1)

output = self.o2o(output_combined)

output = self.dropout(output)

output = self.softmax(output)

return output, hidden

def initHidden(self):

return torch.zeros(1, self.hidden_size)

n_letters = 100

n_hidden = 128

n_categories = 10

rnn = RNN(n_letters, n_hidden, n_categories)

cat = torch.Tensor(1, n_categories)

dummy_input = torch.Tensor(1, n_letters)

hidden = torch.Tensor(1, n_hidden)

out, hidden = rnn(cat, dummy_input, hidden)

with SummaryWriter(comment='RNN') as w:

w.add_graph(rnn, (cat, dummy_input, hidden), verbose=False)

import pytest

print('expect error here:')

with pytest.raises(Exception) as e_info:

dummy_input = torch.rand(1, 1, 224, 224)

with SummaryWriter(comment='basicblock_error') as w:

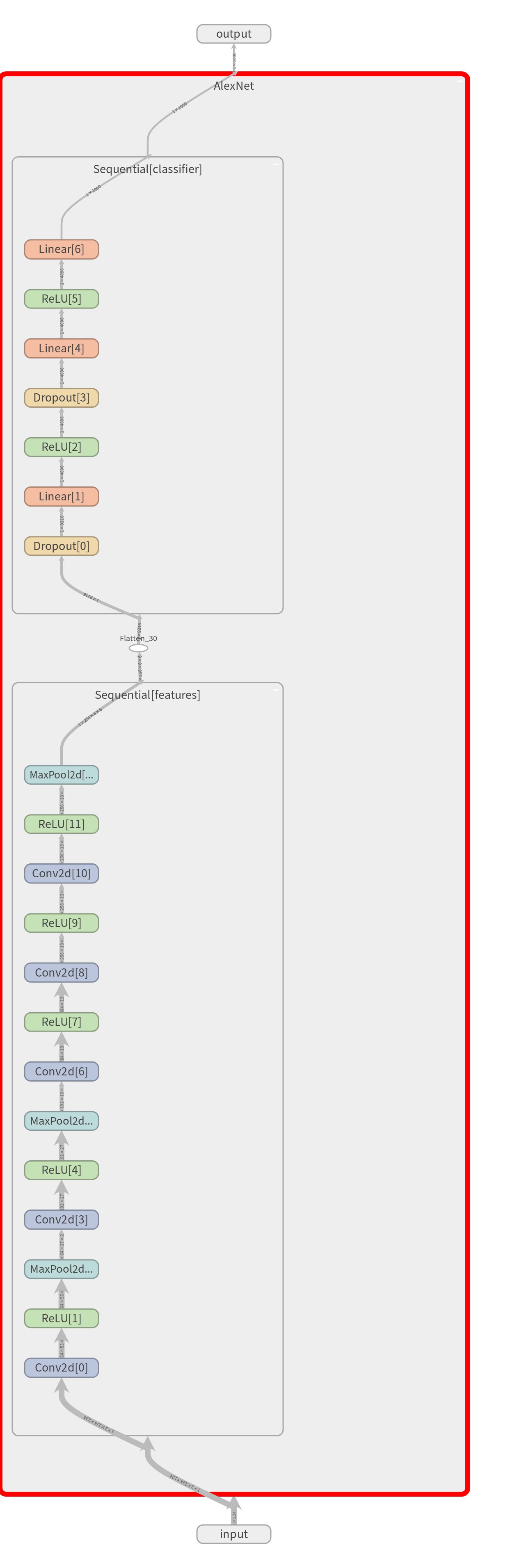

w.add_graph(model, (dummy_input, )) # error这里主要给出了自定义网络 Net1, 自定义网络 Net2, AlexNet, VGG19, DenseNet121, ResNet18, constantModel, basicblock 和 RNN 几个网络 graph 的例示.

运行 tensorboard --logdir=./runs/ 可得到如下可视化,以 AlexNet 为例:

双击 Main Graph 中的 AlexNet 可以查看网络 Graph 的具体网络层,下载 PNG,如:

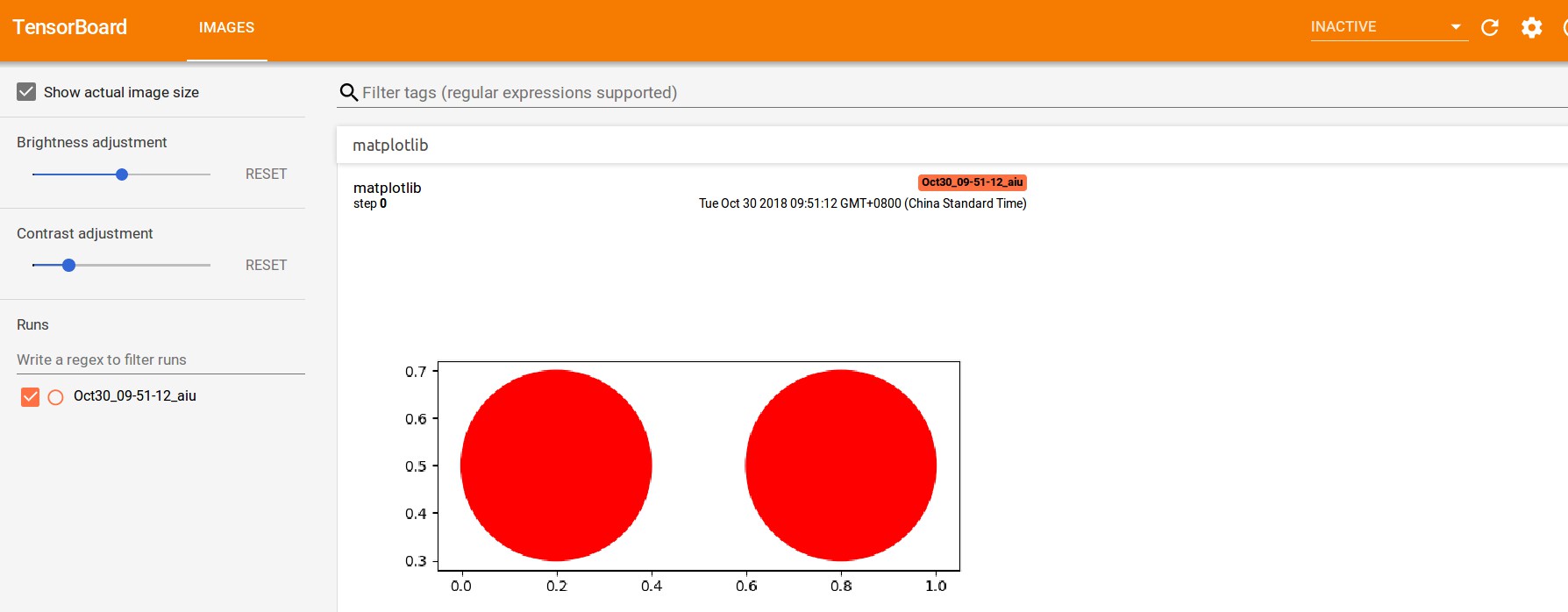
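Net1 and Net2 both declare `nn.Linear(320, 50)`, which only matches the 28×28 dummy input because of how the convolution and pooling layers shrink the feature map. The arithmetic can be checked with the standard output-size formula (stride 1 and no padding for the 5×5 convolutions, max pooling with kernel = stride = 2); `conv_out` and `pool_out` are hypothetical helpers for this sketch.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Spatial output size of a convolution (no dilation)."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out(size, kernel=2):
    """Spatial output size of max pooling with stride equal to the kernel."""
    return size // kernel


size = 28
size = pool_out(conv_out(size, kernel=5))  # conv1 (5x5) + max_pool2d(2): 28 -> 24 -> 12
size = pool_out(conv_out(size, kernel=5))  # conv2 (5x5) + max_pool2d(2): 12 -> 8 -> 4
features = 20 * size * size                # conv2 has 20 output channels
print(features)  # 320
```

The same formula shows why `conv3x3` with `padding=1` keeps a 224×224 input at 224×224 in BasicBlock, so `out += residual` is shape-compatible.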

3. TensorBoardX - matplotlib visualization

demo_matplotlib.py

```python
import matplotlib.pyplot as plt
from tensorboardX import SummaryWriter

plt.switch_backend('agg')  # non-interactive backend, no display needed

fig = plt.figure()
c1 = plt.Circle((0.2, 0.5), 0.2, color='r')
c2 = plt.Circle((0.8, 0.5), 0.2, color='r')
ax = plt.gca()
ax.add_patch(c1)
ax.add_patch(c2)
plt.axis('scaled')

writer = SummaryWriter()
writer.add_figure('matplotlib', fig)
writer.close()
```

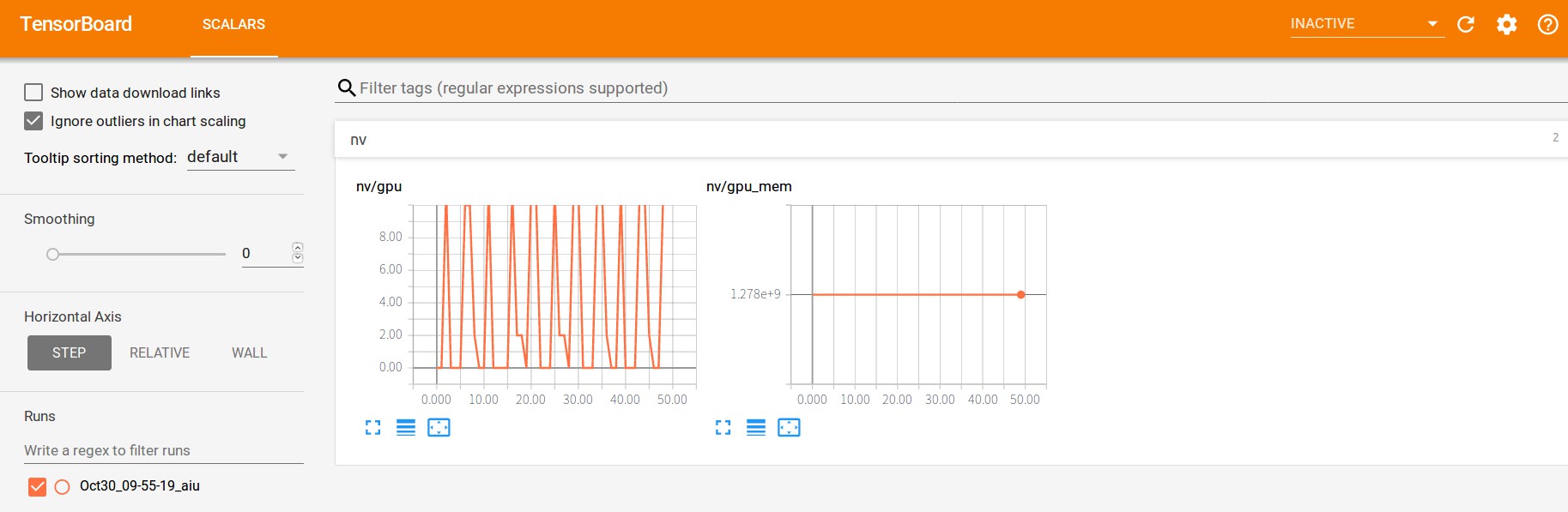

4. TensorBoardX - nvidia-smi visualization

demo_nvidia_smi.py

```python
"""
write gpu and (gpu) memory usage of nvidia cards as scalar
"""
import sys
import time

import torch
from tensorboardX import SummaryWriter

try:
    import nvidia_smi
    nvidia_smi.nvmlInit()
    handle = nvidia_smi.nvmlDeviceGetHandleByIndex(0)  # gpu0
except ImportError:
    print('This demo needs nvidia-ml-py or nvidia-ml-py3')
    sys.exit()

with SummaryWriter() as writer:
    x = []
    for n_iter in range(50):
        # allocate one more uninitialized 1000 x 1000 tensor on the GPU each step
        x.append(torch.empty(1000, 1000, device='cuda'))
        res = nvidia_smi.nvmlDeviceGetUtilizationRates(handle)
        writer.add_scalar('nv/gpu', res.gpu, n_iter)
        res = nvidia_smi.nvmlDeviceGetMemoryInfo(handle)
        writer.add_scalar('nv/gpu_mem', res.used, n_iter)
        time.sleep(0.1)
```

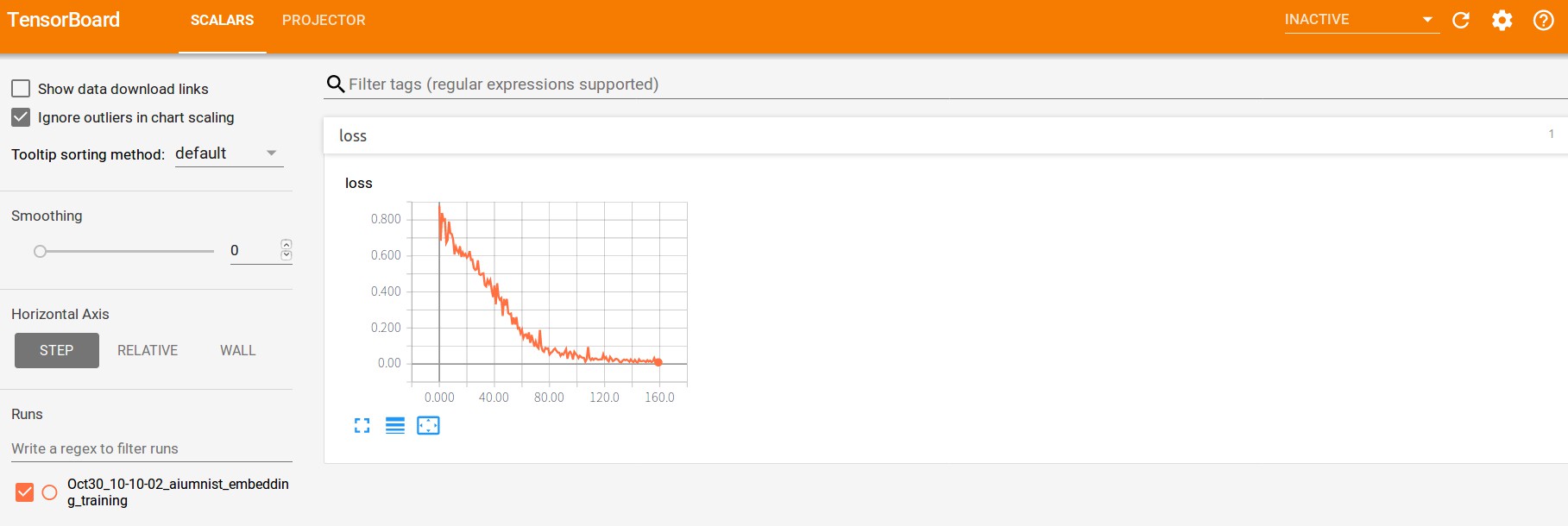
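NVML reports `nvmlDeviceGetMemoryInfo(handle).used` in bytes (and `nvmlDeviceGetUtilizationRates(handle).gpu` as a percentage), so the `nv/gpu_mem` curve shows very large raw numbers. If MiB are easier to read, the value can be converted before logging, as in this sketch; `bytes_to_mib` is a hypothetical helper, and the per-step figure assumes the demo's float32 `1000 x 1000` tensors.

```python
def bytes_to_mib(num_bytes):
    """Convert a byte count (as reported by NVML) to mebibytes."""
    return num_bytes / (1024 ** 2)


# Each demo iteration allocates one float32 tensor of 1000 x 1000 elements.
bytes_per_step = 1000 * 1000 * 4
print(round(bytes_to_mib(bytes_per_step), 2))  # 3.81
# 50 iterations request roughly this much in total; the CUDA caching
# allocator may reserve more than is strictly requested.
print(round(bytes_to_mib(50 * bytes_per_step), 1))  # 190.7
```

Inside the loop this would become something like `writer.add_scalar('nv/gpu_mem_mib', bytes_to_mib(res.used), n_iter)`, where the tag name is only an example.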

5. TensorBoardX - embedding visualization

demo_embedding.py

```python
import torch
import torch.nn as nn
import torch.nn.functional as F
from torch.utils.data import TensorDataset, DataLoader
from tensorboardX import SummaryWriter

# EMBEDDING VISUALIZATION FOR A TWO-CLASSES PROBLEM


# just a bunch of layers
class M(nn.Module):
    def __init__(self):
        super(M, self).__init__()
        self.cn1 = nn.Conv2d(in_channels=1, out_channels=64, kernel_size=3)
        self.cn2 = nn.Conv2d(in_channels=64, out_channels=32, kernel_size=3)
        self.fc1 = nn.Linear(in_features=128, out_features=2)

    def forward(self, i):
        i = self.cn1(i)
        i = F.relu(i)
        i = F.max_pool2d(i, 2)
        i = self.cn2(i)
        i = F.relu(i)
        i = F.max_pool2d(i, 2)
        i = i.view(len(i), -1)
        i = self.fc1(i)
        i = F.log_softmax(i, dim=1)
        return i


# randomly generate data around a given value, with noise added
def get_data(value, shape):
    data = torch.ones(shape) * value
    # add some noise
    data += torch.randn(shape) ** 2
    return data


# dataset: concatenate data generated with two different values
data = torch.cat((get_data(0, (100, 1, 14, 14)),
                  get_data(0.5, (100, 1, 14, 14))), 0)
# labels
labels = torch.cat((torch.zeros(100), torch.ones(100)), 0)
# generator
gen = DataLoader(TensorDataset(data, labels), batch_size=25, shuffle=True)
# network
m = M()
# loss and optim
loss = nn.NLLLoss()
optimizer = torch.optim.Adam(params=m.parameters())
# settings for train and log
num_epochs = 20
embedding_log = 5
writer = SummaryWriter(comment='mnist_embedding_training')

# TRAIN
for epoch in range(num_epochs):
    for j, sample in enumerate(gen):
        n_iter = (epoch * len(gen)) + j
        # reset grad
        optimizer.zero_grad()
        # get batch data
        data_batch = sample[0].float()
        label_batch = sample[1].long()
        # FORWARD
        out = m(data_batch)
        loss_value = loss(out, label_batch)
        # BACKWARD
        loss_value.backward()
        optimizer.step()
        # LOGGING
        writer.add_scalar('loss', loss_value.item(), n_iter)
        if j % embedding_log == 0:
            print("loss_value:{}".format(loss_value.item()))
            # we need 3 dimension for tensor to visualize it!
            out = torch.cat((out.detach(), torch.ones(len(out), 1)), 1)
            writer.add_embedding(out,
                                 metadata=label_batch.tolist(),
                                 label_img=data_batch,
                                 global_step=n_iter)

writer.close()
```
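The comment in the training loop notes that the projector needs 3 dimensions, so the 2-column network output is padded with a constant column of ones. A constant extra coordinate does not change any pairwise distance, so the relative layout of the points is preserved; the sketch below checks this in plain Python (`pad_constant` and the sample points are hypothetical).

```python
import math


def pad_constant(rows, value=1.0):
    """Append a constant coordinate to every row (like torch.cat with a ones column)."""
    return [list(r) + [value] for r in rows]


points = [(-0.1, -2.4), (-1.9, -0.2), (-0.3, -1.3)]  # e.g. 2-class log-probabilities
padded = pad_constant(points)

for i in range(len(points)):
    for j in range(i + 1, len(points)):
        before = math.dist(points[i], points[j])
        after = math.dist(padded[i], padded[j])
        print(i, j, math.isclose(before, after))  # True for every pair
```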

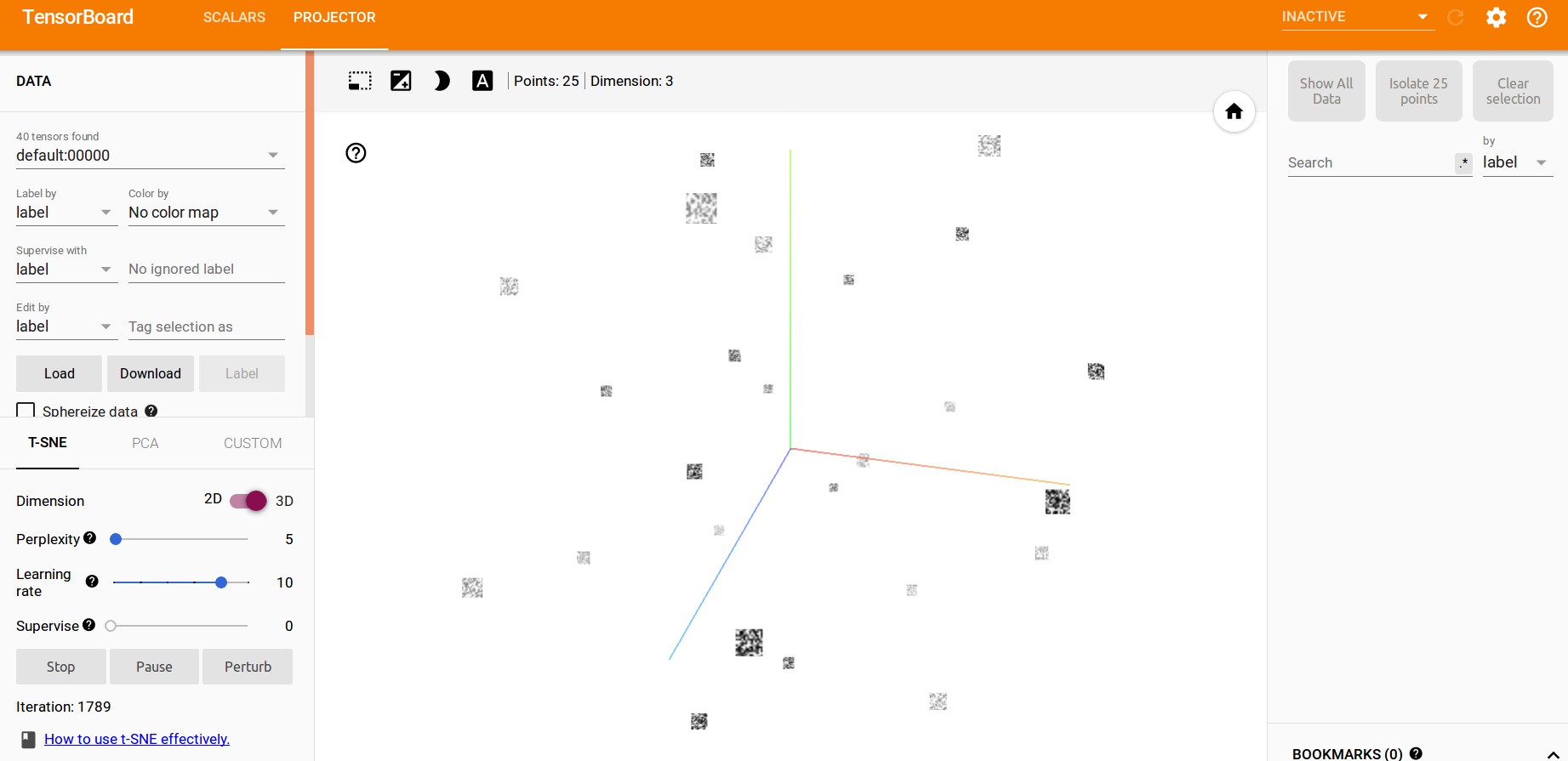

t-SNE:

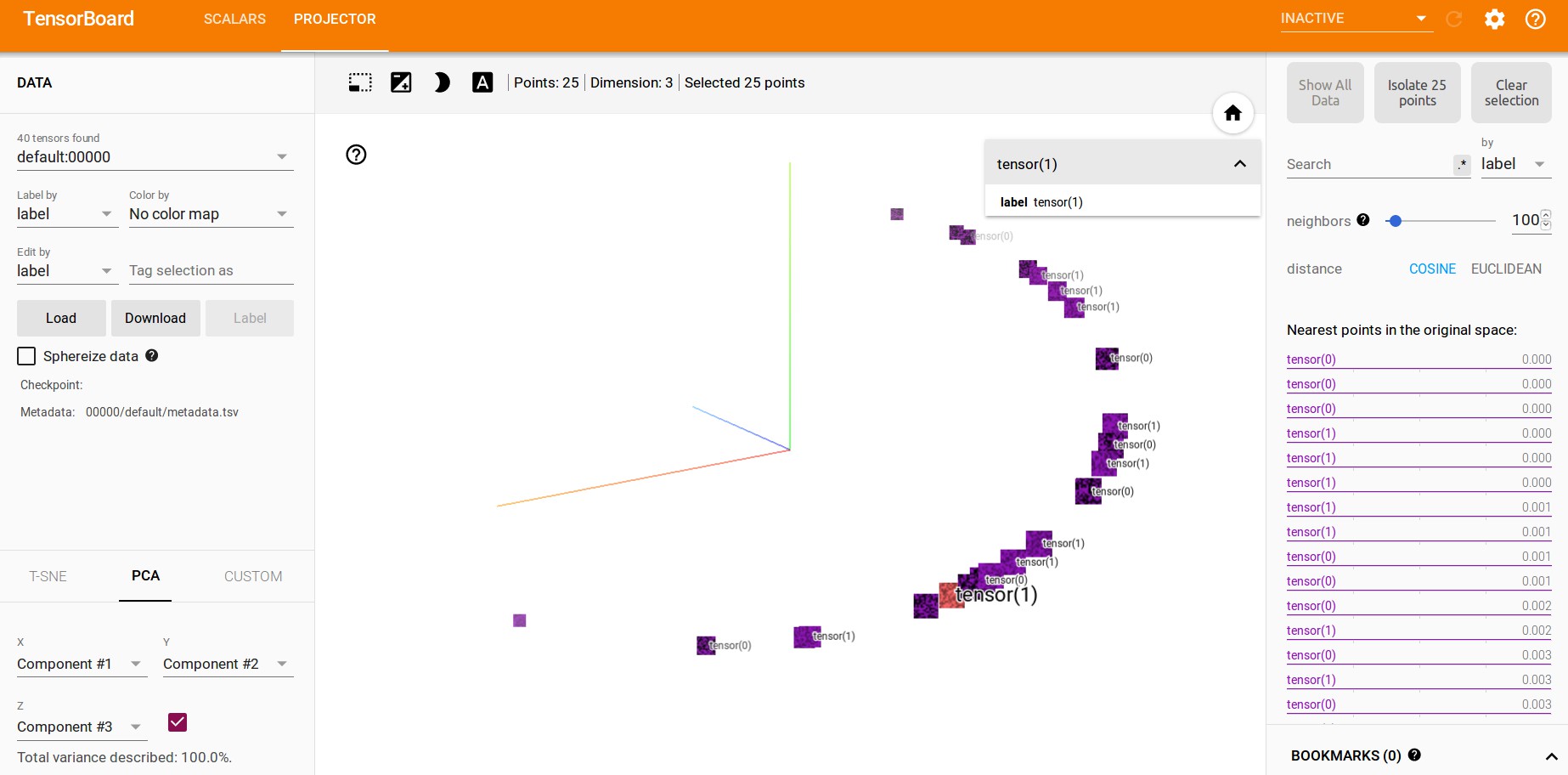

PCA:

6. TensorBoardX - multiple-embedding visualization

demo_multiple_embedding.py

```python
import math

import numpy as np
from tensorboardX import SummaryWriter


def main():
    degrees = np.linspace(0, 3600 * math.pi / 180.0, 3600)
    degrees = degrees.reshape(3600, 1)
    labels = ["%d" % (i) for i in range(0, 3600)]

    with SummaryWriter() as writer:
        # Maybe make a bunch of data that's always shifted in some
        # way, and that will be hard for PCA to turn into a sphere?
        for epoch in range(0, 16):
            shift = epoch * 2 * math.pi / 16.0
            mat = np.concatenate([
                np.sin(shift + degrees * 2 * math.pi / 180.0),
                np.sin(shift + degrees * 3 * math.pi / 180.0),
                np.sin(shift + degrees * 5 * math.pi / 180.0),
                np.sin(shift + degrees * 7 * math.pi / 180.0),
                np.sin(shift + degrees * 11 * math.pi / 180.0)
            ], axis=1)
            writer.add_embedding(
                mat=mat,
                metadata=labels,
                tag="sin",
                global_step=epoch)

            mat = np.concatenate([
                np.cos(shift + degrees * 2 * math.pi / 180.0),
                np.cos(shift + degrees * 3 * math.pi / 180.0),
                np.cos(shift + degrees * 5 * math.pi / 180.0),
                np.cos(shift + degrees * 7 * math.pi / 180.0),
                np.cos(shift + degrees * 11 * math.pi / 180.0)
            ], axis=1)
            writer.add_embedding(
                mat=mat,
                metadata=labels,
                tag="cos",
                global_step=epoch)

            mat = np.concatenate([
                np.tan(shift + degrees * 2 * math.pi / 180.0),
                np.tan(shift + degrees * 3 * math.pi / 180.0),
                np.tan(shift + degrees * 5 * math.pi / 180.0),
                np.tan(shift + degrees * 7 * math.pi / 180.0),
                np.tan(shift + degrees * 11 * math.pi / 180.0)
            ], axis=1)
            writer.add_embedding(
                mat=mat,
                metadata=labels,
                tag="tan",
                global_step=epoch)


if __name__ == "__main__":
    main()
```
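Each `add_embedding` call in this demo logs a 3600 × 5 matrix (one row per point, one column per frequency multiplier) together with one metadata label per row. The sketch below rebuilds the "sin" matrix for a single epoch in plain Python to make those shapes explicit; `sin_matrix` and `MULTIPLIERS` are hypothetical names that mirror the NumPy code above.

```python
import math

MULTIPLIERS = (2, 3, 5, 7, 11)  # the five frequency multipliers used in the demo


def sin_matrix(epoch, n_points=3600, epochs=16):
    """Rebuild the demo's 'sin' embedding for one epoch as a list of rows."""
    # Same spacing as np.linspace(0, 3600 * pi / 180, 3600), endpoint included
    stop = 3600 * math.pi / 180.0
    step = stop / (n_points - 1)
    shift = epoch * 2 * math.pi / epochs
    rows = []
    for i in range(n_points):
        x = i * step
        rows.append([math.sin(shift + x * k * math.pi / 180.0) for k in MULTIPLIERS])
    return rows


mat = sin_matrix(epoch=0)
labels = ["%d" % i for i in range(3600)]
print(len(mat), len(mat[0]), len(labels))  # 3600 5 3600
```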