GitHub - justinpinkney/stylegan2

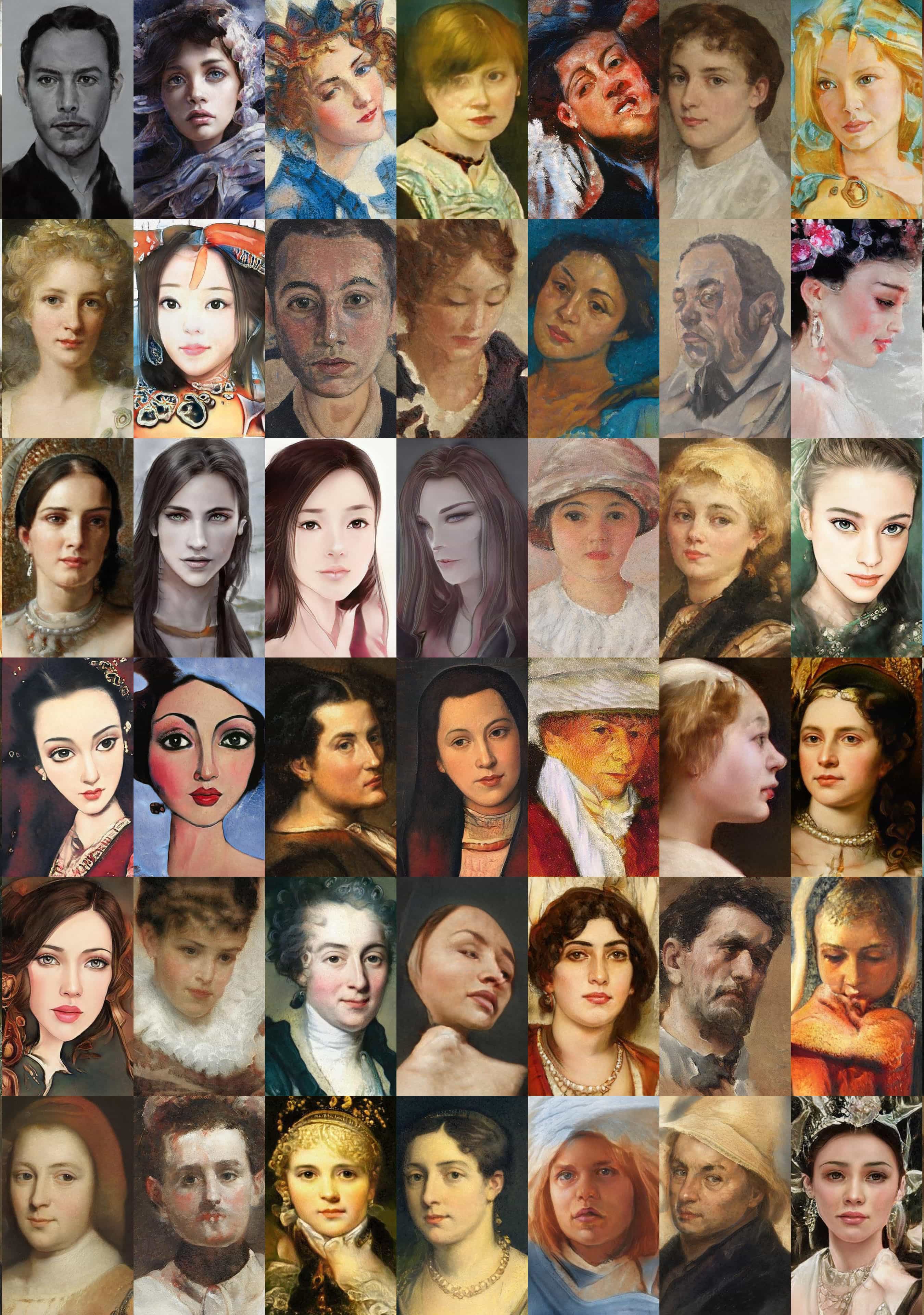

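Face stylization (toonification) based on StyleGAN2. The image above shows an example of the project's output.

This post mainly records the testing process.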

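1. Setup

Clone the project and check that nvcc works (the code requires TensorFlow 1.x):

    git clone https://github.com/justinpinkney/stylegan2
    cd stylegan2
    # Compile and run the bundled CUDA test program to verify the nvcc toolchain
    nvcc test_nvcc.cu -o test_nvcc -run

Download the weight files: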

    import pretrained_networks

    # ffhq-cartoon-blended-64.pkl
    blended_url = "https://drive.google.com/uc?id=1H73TfV5gQ9ot7slSed_l-lim9X7pMRiU"
    # stylegan2-ffhq-config-f.pkl
    ffhq_url = "http://d36zk2xti64re0.cloudfront.net/stylegan2/networks/stylegan2-ffhq-config-f.pkl"

    # For example, load both generators:
    _, _, Gs_blended = pretrained_networks.load_networks(blended_url)
    _, _, Gs = pretrained_networks.load_networks(ffhq_url)

Download a test image, assuming it is stored under raw/. A fairly high-resolution test image is recommended.

    mkdir -p raw
    wget https://upload.wikimedia.org/wikipedia/commons/6/6d/Shinz%C5%8D_Abe_Official.jpg -O raw/example.jpg

2. Testing

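For each test image, the workflow is:

- Face detection and alignment

- Face projection (face encoding, i.e. computing the latent code of the face)

- Face toonification (i.e. feeding the latent code to the cartoon model)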
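
The first two stages are run as command-line scripts (`align_images.py` and `project_images.py`, shown in the sections below). As a rough sketch, they could be chained from Python as follows. The function names `build_pipeline_commands` and `run_pipeline` are hypothetical helpers, not part of the repository; the directory names and `--num-steps 500` follow the values used in this post.

```python
import subprocess


def build_pipeline_commands(raw_dir="raw/", aligned_dir="aligned/",
                            generated_dir="generated/", num_steps=500):
    """Return the argv lists for the two script-driven stages (hypothetical helper)."""
    # Stage 1: detect and align faces found in raw_dir, writing crops to aligned_dir.
    align_cmd = ["python", "align_images.py", raw_dir, aligned_dir]
    # Stage 2: project each aligned face into the latent space of the FFHQ model.
    project_cmd = ["python", "project_images.py", "--num-steps", str(num_steps),
                   aligned_dir, generated_dir]
    return [align_cmd, project_cmd]


def run_pipeline(**kwargs):
    """Run the stages in order; check=True stops at the first failing stage."""
    for cmd in build_pipeline_commands(**kwargs):
        subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # Only print the commands here; call run_pipeline() from inside the cloned repo.
    for cmd in build_pipeline_commands():
        print(" ".join(cmd))
```

The third stage (toonification) has no script in this post; it is the inline snippet in section 2.3.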

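2.1. Face alignment

Related: Dlib library - facial landmark detection and alignment implementation - AIUAI

Face alignment implementation: https://github.com/justinpinkney/stylegan2/blob/master/align_images.py

Run it as follows, assuming the aligned images are written to aligned/:

    python align_images.py raw/ aligned/

The aligned result is shown in the figure: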

2.2. Face projection

Run the projection, assuming the output directory is generated/:

    python project_images.py --num-steps 500 aligned/ generated/

2.2.1. projector
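
The learning-rate and latent-noise schedules applied by `step()` in projector.py are easier to read in isolation. The following is a minimal standalone sketch that mirrors those expressions using only the standard library. The function name `projector_schedule` and the default `dlatent_std=1.0` are assumptions for illustration; in the real code the standard deviation is estimated in `set_network` from 10000 mapping-network samples.

```python
import math


def projector_schedule(step, num_steps=1000, initial_learning_rate=0.1,
                       initial_noise_factor=0.05, dlatent_std=1.0,
                       lr_rampdown_length=0.25, lr_rampup_length=0.05,
                       noise_ramp_length=0.75):
    """Return (learning_rate, noise_strength) for a given optimization step."""
    t = step / num_steps  # progress in [0, 1]
    # Noise added to the latent decays quadratically and reaches zero
    # once t >= noise_ramp_length.
    noise_strength = (dlatent_std * initial_noise_factor
                      * max(0.0, 1.0 - t / noise_ramp_length) ** 2)
    # Cosine ramp-down over the last lr_rampdown_length fraction of the run.
    lr_ramp = min(1.0, (1.0 - t) / lr_rampdown_length)
    lr_ramp = 0.5 - 0.5 * math.cos(lr_ramp * math.pi)
    # Linear warm-up over the first lr_rampup_length fraction of the run.
    lr_ramp = lr_ramp * min(1.0, t / lr_rampup_length)
    return initial_learning_rate * lr_ramp, noise_strength


if __name__ == "__main__":
    for s in (0, 25, 50, 500, 750, 900, 1000):
        lr, noise = projector_schedule(s)
        print("step %4d  lr %.4f  noise %.4f" % (s, lr, noise))
```

With these defaults the learning rate starts at zero, reaches its full value after 5% of the steps, and decays back to zero over the last 25%, while the latent noise vanishes after 75% of the steps.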

projector.py

    import numpy as np
    import tensorflow as tf
    import dnnlib
    import dnnlib.tflib as tflib

    from training import misc

    #----------------------------------------------------------------------------

    class Projector:
        def __init__(self,
            vgg16_pkl             = 'https://drive.google.com/uc?id=1N2-m9qszOeVC9Tq77WxsLnuWwOedQiD2',
            num_steps             = 1000,
            initial_learning_rate = 0.1,
            initial_noise_factor  = 0.05,
            verbose               = False
        ):
            self.vgg16_pkl                  = vgg16_pkl
            self.num_steps                  = num_steps
            self.dlatent_avg_samples        = 10000
            self.initial_learning_rate      = initial_learning_rate
            self.initial_noise_factor       = initial_noise_factor
            self.lr_rampdown_length         = 0.25
            self.lr_rampup_length           = 0.05
            self.noise_ramp_length          = 0.75
            self.regularize_noise_weight    = 1e5
            self.verbose                    = verbose
            self.clone_net                  = True

            self._Gs                    = None
            self._minibatch_size        = None
            self._dlatent_avg           = None
            self._dlatent_std           = None
            self._noise_vars            = None
            self._noise_init_op         = None
            self._noise_normalize_op    = None
            self._dlatents_var          = None
            self._noise_in              = None
            self._dlatents_expr         = None
            self._images_expr           = None
            self._target_images_var     = None
            self._lpips                 = None
            self._dist                  = None
            self._loss                  = None
            self._reg_sizes             = None
            self._lrate_in              = None
            self._opt                   = None
            self._opt_step              = None
            self._cur_step              = None

        def _info(self, *args):
            if self.verbose:
                print('Projector:', *args)

        def set_network(self, Gs, minibatch_size=1):
            assert minibatch_size == 1
            self._Gs = Gs
            self._minibatch_size = minibatch_size
            if self._Gs is None:
                return
            if self.clone_net:
                self._Gs = self._Gs.clone()

            # Find dlatent stats.
            self._info('Finding W midpoint and stddev using %d samples...' % self.dlatent_avg_samples)
            latent_samples = np.random.RandomState(123).randn(self.dlatent_avg_samples, *self._Gs.input_shapes[0][1:])
            dlatent_samples = self._Gs.components.mapping.run(latent_samples, None) # [N, 18, 512]
            self._dlatent_avg = np.mean(dlatent_samples, axis=0, keepdims=True) # [1, 18, 512]
            self._dlatent_std = (np.sum((dlatent_samples - self._dlatent_avg) ** 2) / self.dlatent_avg_samples) ** 0.5
            self._info('std = %g' % self._dlatent_std)

            # Find noise inputs.
            self._info('Setting up noise inputs...')
            self._noise_vars = []
            noise_init_ops = []
            noise_normalize_ops = []
            while True:
                n = 'G_synthesis/noise%d' % len(self._noise_vars)
                if not n in self._Gs.vars:
                    break
                v = self._Gs.vars[n]
                self._noise_vars.append(v)
                noise_init_ops.append(tf.assign(v, tf.random_normal(tf.shape(v), dtype=tf.float32)))
                noise_mean = tf.reduce_mean(v)
                noise_std = tf.reduce_mean((v - noise_mean)**2)**0.5
                noise_normalize_ops.append(tf.assign(v, (v - noise_mean) / noise_std))
                self._info(n, v)
            self._noise_init_op = tf.group(*noise_init_ops)
            self._noise_normalize_op = tf.group(*noise_normalize_ops)

            # Image output graph.
            self._info('Building image output graph...')
            self._dlatents_var = tf.Variable(tf.zeros([self._minibatch_size] + list(self._dlatent_avg.shape[1:])), name='dlatents_var')
            self._noise_in = tf.placeholder(tf.float32, [], name='noise_in')
            dlatents_noise = tf.random.normal(shape=self._dlatents_var.shape) * self._noise_in
            self._dlatents_expr = self._dlatents_var + dlatents_noise
            self._images_expr = self._Gs.components.synthesis.get_output_for(self._dlatents_expr, randomize_noise=False)

            # Downsample image to 256x256 if it's larger than that. VGG was built for 224x224 images.
            proc_images_expr = (self._images_expr + 1) * (255 / 2)
            sh = proc_images_expr.shape.as_list()
            if sh[2] > 256:
                factor = sh[2] // 256
                proc_images_expr = tf.reduce_mean(tf.reshape(proc_images_expr, [-1, sh[1], sh[2] // factor, factor, sh[2] // factor, factor]), axis=[3,5])

            # Loss graph.
            self._info('Building loss graph...')
            self._target_images_var = tf.Variable(tf.zeros(proc_images_expr.shape), name='target_images_var')
            if self._lpips is None:
                self._lpips = misc.load_pkl(self.vgg16_pkl) # vgg16_zhang_perceptual.pkl
            self._dist = self._lpips.get_output_for(proc_images_expr, self._target_images_var)
            self._loss = tf.reduce_sum(self._dist)

            # Noise regularization graph.
            self._info('Building noise regularization graph...')
            reg_loss = 0.0
            for v in self._noise_vars:
                sz = v.shape[2]
                while True:
                    reg_loss += tf.reduce_mean(v * tf.roll(v, shift=1, axis=3))**2 + tf.reduce_mean(v * tf.roll(v, shift=1, axis=2))**2
                    if sz <= 8:
                        break # Small enough already
                    v = tf.reshape(v, [1, 1, sz//2, 2, sz//2, 2]) # Downscale
                    v = tf.reduce_mean(v, axis=[3, 5])
                    sz = sz // 2
            self._loss += reg_loss * self.regularize_noise_weight

            # Optimizer.
            self._info('Setting up optimizer...')
            self._lrate_in = tf.placeholder(tf.float32, [], name='lrate_in')
            self._opt = dnnlib.tflib.Optimizer(learning_rate=self._lrate_in)
            self._opt.register_gradients(self._loss, [self._dlatents_var] + self._noise_vars)
            self._opt_step = self._opt.apply_updates()

        def run(self, target_images):
            # Run to completion.
            self.start(target_images)
            while self._cur_step < self.num_steps:
                self.step()

            # Collect results.
            pres = dnnlib.EasyDict()
            pres.dlatents = self.get_dlatents()
            pres.noises = self.get_noises()
            pres.images = self.get_images()
            return pres

        def start(self, target_images):
            assert self._Gs is not None

            # Prepare target images.
            self._info('Preparing target images...')
            target_images = np.asarray(target_images, dtype='float32')
            target_images = (target_images + 1) * (255 / 2)
            sh = target_images.shape
            assert sh[0] == self._minibatch_size
            if sh[2] > self._target_images_var.shape[2]:
                factor = sh[2] // self._target_images_var.shape[2]
                target_images = np.reshape(target_images, [-1, sh[1], sh[2] // factor, factor, sh[3] // factor, factor]).mean((3, 5))

            # Initialize optimization state.
            self._info('Initializing optimization state...')
            tflib.set_vars({self._target_images_var: target_images, self._dlatents_var: np.tile(self._dlatent_avg, [self._minibatch_size, 1, 1])})
            tflib.run(self._noise_init_op)
            self._opt.reset_optimizer_state()
            self._cur_step = 0

        def step(self):
            assert self._cur_step is not None
            if self._cur_step >= self.num_steps:
                return
            if self._cur_step == 0:
                self._info('Running...')

            # Hyperparameters.
            t = self._cur_step / self.num_steps
            noise_strength = self._dlatent_std * self.initial_noise_factor * max(0.0, 1.0 - t / self.noise_ramp_length) ** 2
            lr_ramp = min(1.0, (1.0 - t) / self.lr_rampdown_length)
            lr_ramp = 0.5 - 0.5 * np.cos(lr_ramp * np.pi)
            lr_ramp = lr_ramp * min(1.0, t / self.lr_rampup_length)
            learning_rate = self.initial_learning_rate * lr_ramp

            # Train.
            feed_dict = {self._noise_in: noise_strength, self._lrate_in: learning_rate}
            _, dist_value, loss_value = tflib.run([self._opt_step, self._dist, self._loss], feed_dict)
            tflib.run(self._noise_normalize_op)

            # Print status.
            self._cur_step += 1
            if self._cur_step == self.num_steps or self._cur_step % 10 == 0:
                self._info('%-8d%-12g%-12g' % (self._cur_step, dist_value, loss_value))
            if self._cur_step == self.num_steps:
                self._info('Done.')

        def get_cur_step(self):
            return self._cur_step

        def get_dlatents(self):
            return tflib.run(self._dlatents_expr, {self._noise_in: 0})

        def get_noises(self):
            return tflib.run(self._noise_vars)

        def get_images(self):
            return tflib.run(self._images_expr, {self._noise_in: 0})

2.2.2. project_images
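
One detail to watch in project_images.py: `--verbose` and `--video` are declared with `type=bool`. argparse applies `bool()` to the raw string, and every non-empty string is truthy, so `--video False` still enables video. The sketch below reproduces the behaviour and shows one possible workaround. `str2bool` and `build_parser` are hypothetical helpers written for this illustration, not part of the repository.

```python
import argparse


def str2bool(value):
    """Parse common true/false spellings (hypothetical helper, not in the repo)."""
    lowered = value.lower()
    if lowered in ("1", "true", "yes", "y"):
        return True
    if lowered in ("0", "false", "no", "n"):
        return False
    raise argparse.ArgumentTypeError("expected a boolean, got %r" % value)


def build_parser(bool_type):
    """Build a one-option parser using the given converter for --video."""
    parser = argparse.ArgumentParser()
    parser.add_argument('--video', type=bool_type, default=False)
    return parser


# type=bool, as in the script: bool('False') is True because the string is non-empty.
naive = build_parser(bool).parse_args(['--video', 'False'])
# Explicit string parsing gives the intended value.
fixed = build_parser(str2bool).parse_args(['--video', 'False'])
print(naive.video, fixed.video)
```

With the script as written, the safe way to keep video rendering off is to omit `--video` entirely.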

project_images.py

    # from https://github.com/rolux/stylegan2encoder
    import argparse
    import os
    import shutil
    import numpy as np

    import dnnlib
    import dnnlib.tflib as tflib
    import pretrained_networks
    import projector
    import dataset_tool
    from training import dataset
    from training import misc

    def project_image(proj, src_file, dst_dir, tmp_dir, video=False):
        data_dir = '%s/dataset' % tmp_dir
        if os.path.exists(data_dir):
            shutil.rmtree(data_dir)
        image_dir = '%s/images' % data_dir
        tfrecord_dir = '%s/tfrecords' % data_dir
        os.makedirs(image_dir, exist_ok=True)
        shutil.copy(src_file, image_dir + '/')
        # Convert the single image to a tfrecords dataset and load it back.
        dataset_tool.create_from_images_raw(tfrecord_dir, image_dir, shuffle=0)
        dataset_obj = dataset.load_dataset(
            data_dir=data_dir, tfrecord_dir='tfrecords',
            max_label_size=0, repeat=False, shuffle_mb=0
        )

        print('Projecting image "%s"...' % os.path.basename(src_file))
        images, _labels = dataset_obj.get_minibatch_np(1)
        images = misc.adjust_dynamic_range(images, [0, 255], [-1, 1])
        proj.start(images)
        if video:
            video_dir = '%s/video' % tmp_dir
            os.makedirs(video_dir, exist_ok=True)
        while proj.get_cur_step() < proj.num_steps:
            print('\r%d / %d ... ' % (proj.get_cur_step(), proj.num_steps), end='', flush=True)
            proj.step()
            if video:
                filename = '%s/%08d.png' % (video_dir, proj.get_cur_step())
                misc.save_image_grid(proj.get_images(), filename, drange=[-1,1])
        print('\r%-30s\r' % '', end='', flush=True)

        # Save the projected image (.png) and its latent code (.npy).
        os.makedirs(dst_dir, exist_ok=True)
        filename = os.path.join(dst_dir, os.path.basename(src_file)[:-4] + '.png')
        misc.save_image_grid(proj.get_images(), filename, drange=[-1,1])
        filename = os.path.join(dst_dir, os.path.basename(src_file)[:-4] + '.npy')
        np.save(filename, proj.get_dlatents()[0])

    def render_video(src_file, dst_dir, tmp_dir, num_frames, mode, size, fps, codec, bitrate):
        import PIL.Image
        import moviepy.editor

        def render_frame(t):
            frame = np.clip(np.ceil(t * fps), 1, num_frames)
            image = PIL.Image.open('%s/video/%08d.png' % (tmp_dir, frame))
            if mode == 1:
                canvas = image
            else:
                canvas = PIL.Image.new('RGB', (2 * src_size, src_size))
                canvas.paste(src_image, (0, 0))
                canvas.paste(image, (src_size, 0))
            if size != src_size:
                canvas = canvas.resize((mode * size, size), PIL.Image.LANCZOS)
            return np.array(canvas)

        src_image = PIL.Image.open(src_file)
        src_size = src_image.size[1]
        duration = num_frames / fps
        filename = os.path.join(dst_dir, os.path.basename(src_file)[:-4] + '.mp4')
        video_clip = moviepy.editor.VideoClip(render_frame, duration=duration)
        video_clip.write_videofile(filename, fps=fps, codec=codec, bitrate=bitrate)

    def main():
        parser = argparse.ArgumentParser(description='Project real-world images into StyleGAN2 latent space')
        parser.add_argument('src_dir', help='Directory with aligned images for projection')
        parser.add_argument('dst_dir', help='Output directory')
        parser.add_argument('--tmp-dir', default='.stylegan2-tmp', help='Temporary directory for tfrecords and video frames')
        parser.add_argument('--network-pkl', default='http://d36zk2xti64re0.cloudfront.net/stylegan2/networks/stylegan2-ffhq-config-f.pkl', help='StyleGAN2 network pickle filename')
        parser.add_argument('--vgg16-pkl', default='http://d36zk2xti64re0.cloudfront.net/stylegan1/networks/metrics/vgg16_zhang_perceptual.pkl', help='VGG16 network pickle filename')
        parser.add_argument('--num-steps', type=int, default=1000, help='Number of optimization steps')
        parser.add_argument('--initial-learning-rate', type=float, default=0.1, help='Initial learning rate')
        parser.add_argument('--initial-noise-factor', type=float, default=0.05, help='Initial noise factor')
        # Caution: type=bool turns any non-empty string (including 'False') into True.
        parser.add_argument('--verbose', type=bool, default=False, help='Verbose output')
        parser.add_argument('--video', type=bool, default=False, help='Render video of the optimization process')
        parser.add_argument('--video-mode', type=int, default=1, help='Video mode: 1 for optimization only, 2 for source + optimization')
        parser.add_argument('--video-size', type=int, default=1024, help='Video size (height in px)')
        parser.add_argument('--video-fps', type=int, default=25, help='Video framerate')
        parser.add_argument('--video-codec', default='libx264', help='Video codec')
        parser.add_argument('--video-bitrate', default='5M', help='Video bitrate')
        args = parser.parse_args()

        print('Loading networks from "%s"...' % args.network_pkl)
        _G, _D, Gs = pretrained_networks.load_networks(args.network_pkl)
        proj = projector.Projector(
            vgg16_pkl             = args.vgg16_pkl,
            num_steps             = args.num_steps,
            initial_learning_rate = args.initial_learning_rate,
            initial_noise_factor  = args.initial_noise_factor,
            verbose               = args.verbose
        )
        proj.set_network(Gs)

        # Skip hidden files and files starting with an underscore.
        src_files = sorted([os.path.join(args.src_dir, f) for f in os.listdir(args.src_dir) if f[0] not in '._'])
        for src_file in src_files:
            project_image(proj, src_file, args.dst_dir, args.tmp_dir, video=args.video)
            if args.video:
                render_video(
                    src_file, args.dst_dir, args.tmp_dir, args.num_steps, args.video_mode,
                    args.video_size, args.video_fps, args.video_codec, args.video_bitrate
                )
            shutil.rmtree(args.tmp_dir)

    if __name__ == '__main__':
        main()

2.3. Face toonification

    import numpy as np
    from PIL import Image
    import matplotlib.pyplot as plt
    import dnnlib
    import dnnlib.tflib as tflib
    from pathlib import Path

    # Gs_blended is the blended cartoon generator loaded in section 1.
    latent_dir = Path("generated")
    latents = latent_dir.glob("*.npy")
    for latent_file in latents:
        latent = np.load(latent_file)
        latent = np.expand_dims(latent, axis=0)  # add the batch dimension
        synthesis_kwargs = dict(output_transform=dict(func=tflib.convert_images_to_uint8, nchw_to_nhwc=False), minibatch_size=8)
        images = Gs_blended.components.synthesis.run(latent, randomize_noise=False, **synthesis_kwargs)
        # Convert NCHW to NHWC and save the first (only) image of the batch.
        Image.fromarray(images.transpose((0,2,3,1))[0], 'RGB').save(latent_file.parent / (f"{latent_file.stem}-toon.jpg"))

    # Show the projected face next to its toonified version.
    embedded = Image.open("generated/example_01.png")
    tooned = Image.open("generated/example_01-toon.jpg")
    plt.figure()
    plt.subplot(121)
    plt.imshow(embedded)
    plt.axis('off')
    plt.subplot(122)
    plt.imshow(tooned)
    plt.axis('off')
    plt.show()

The result is shown in the figure: